Western tactical neutron bombs were disarmed after Russian propaganda lie. Russia now has over 2000 ... a reposting of the 2022 post with improvements & revisions NUKEGATE (updated: 2024)

(For the essential so-called "overkill" background or Sir Slim's "the more you use, fewer you lose" success formula for winning in Burma against Japan - where physicist Herman Kahn served while his friend Sam Cohen was calculating nuclear weapon efficiencies at the Los Alamos Manhattan Project, which again used "overkill" to convince the opponent to throw in the towel - please see my post on the practicalities of really DETERRING WWIII linked here.)

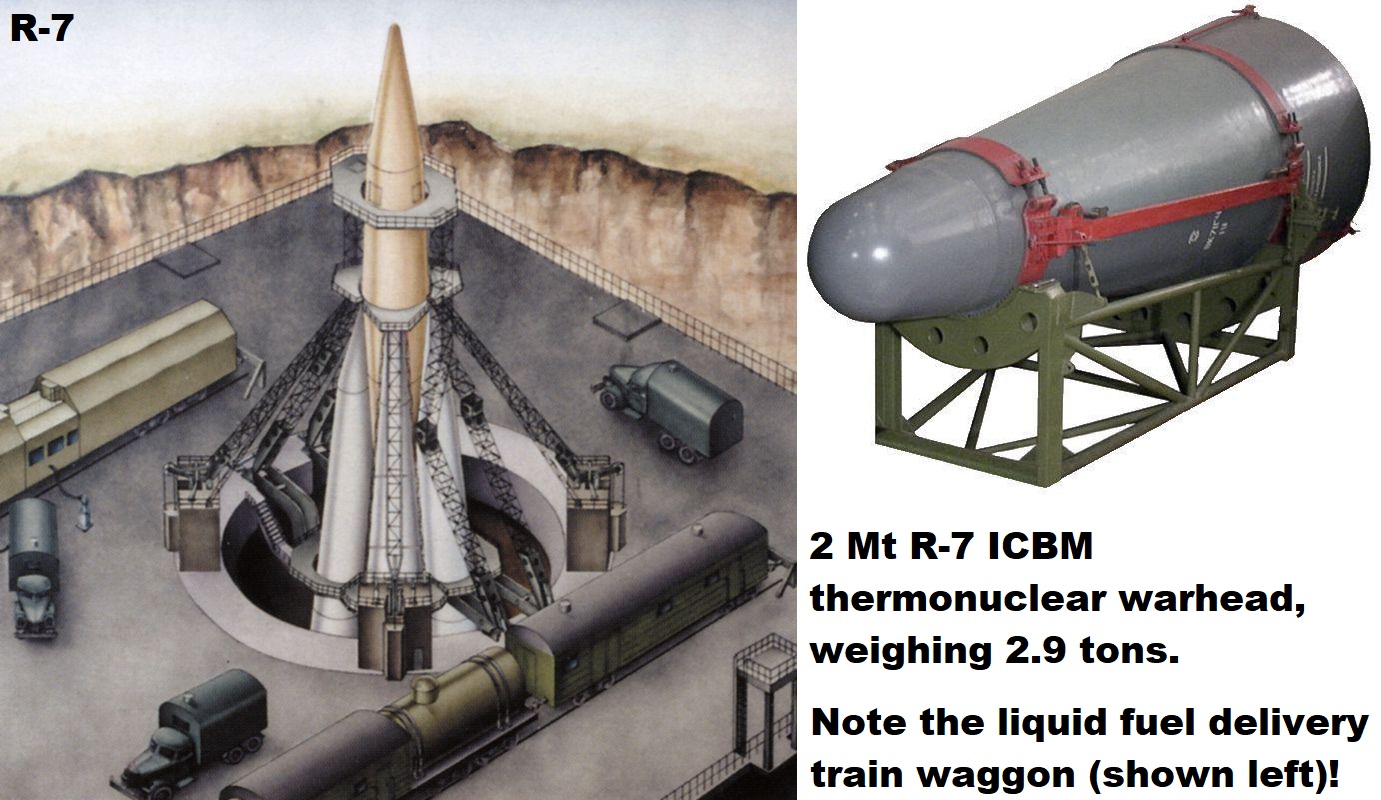

ABOVE: Russian 1985 1st Cold War SLBM first strike plan. The initial use of Russian SLBM-launched nuclear missiles from off-coast against command and control centres (i.e. nuclear explosions to destroy warning satellite communications centres by radiation on satellites as well as EMP against ground targets, rather than missiles launched from Russia against cities, as assumed by 100% of the Cold War left-wing propaganda) is allegedly a Russian "fog of war" strategy. Such a "demonstration strike" is aimed essentially at causing confusion about what is going on and who is responsible: it is not quick or easy to finger-print high altitude bursts fired by SLBMs from submerged submarines to a particular country, because you don't get fallout samples to identify isotopic plutonium composition. Russia could immediately deny the attack (implying, probably to the applause of the left-wingers, that this was some kind of American training exercise or computer based nuclear weapons "accident", similar to those depicted in numerous anti-nuclear Cold War propaganda films). Thinly-veiled ultimatums and blackmail follow. As with Pearl Harbor in 1941, America would not lose its population or even key cities in such a first strike (contrary to left-wing propaganda fiction); it would lose its complacency and its sense of security through isolationism, and would either be forced into a humiliating defeat or a major war.

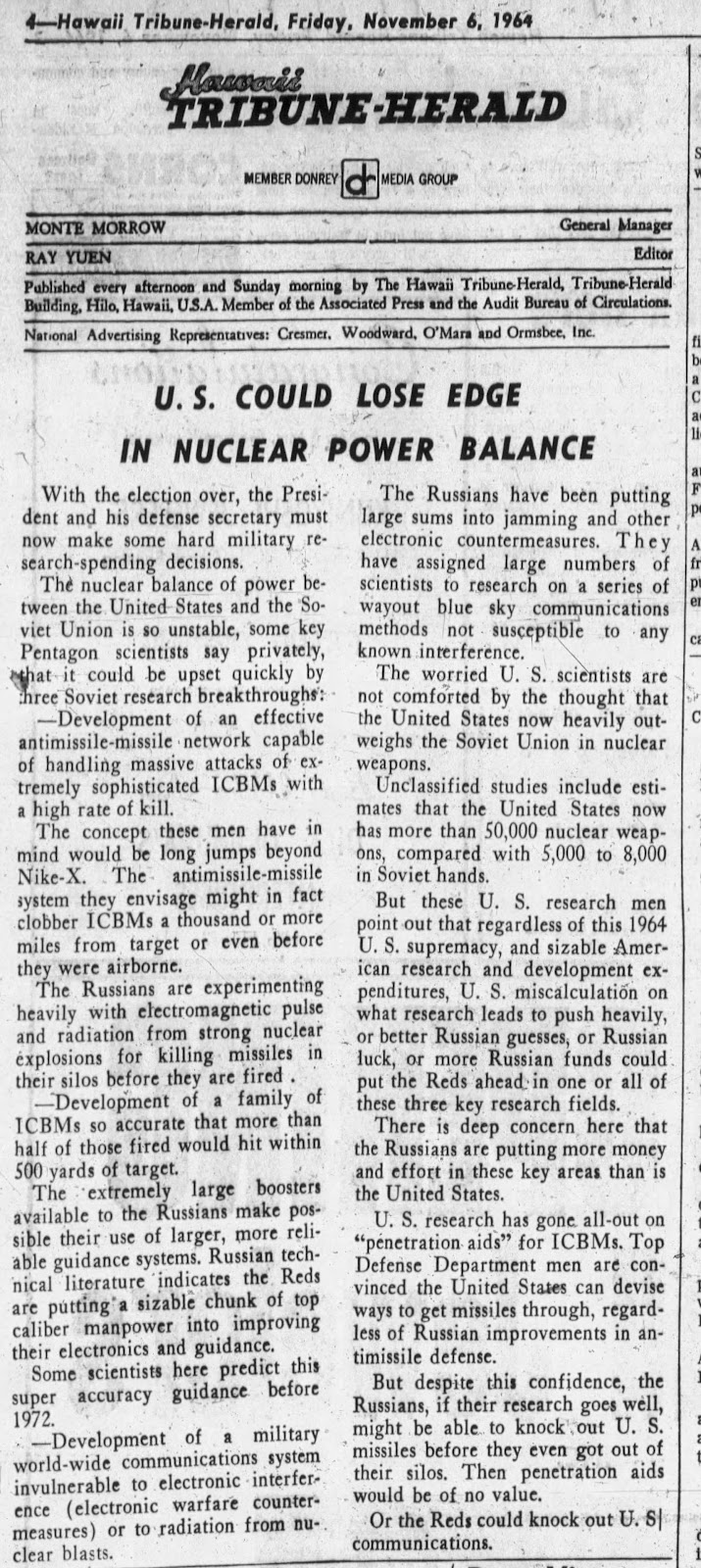

Before 1941, many warned of the risks but were dismissed on the basis that Japan was a smaller country with a smaller economy than the USA and war was therefore absurd (similar to the way Churchill's warnings about European dictators were dismissed by "arms-race opposing pacifists" not only in the 1930s, but even before WWI; for example Professor Cyril Joad documents in the 1939 book "Why War?" his first hand witnessing of the sneering Norman Angell dismissing Winston Churchill's pre-WWI warning and call for an arms-race to deter that war). It is vital to note that there is an immense pressure against warnings of Russian nuclear superiority even today, most of it contradictory. E.g. the left wing (Russian biased) "experts" whose voices are the only ones reported in the Western media (traditionally led by "Scientific American" and "Bulletin of the Atomic Scientists"), simultaneously claim Russia poses such a complex SLBM and ICBM threat that we must disarm now, while also claiming that their tactical nuclear weapons probably won't work so aren't a threat! In similar vein, Teller-critic Hans Bethe also used to falsely "dismiss" Russian nuclear superiority by claiming (without any more evidence than Brezhnev's word, it appeared) that Russian delivery systems are "less accurate" than Western missiles (as if accuracy has anything to do with high altitude EMP strikes, where the effects cover radii of thousands of miles). Such claims would then be repeated endlessly in the Western media by Russian biased "journalists" or agents of influence, and any attempt to point out the propaganda would turn into a "Reds under beds" argument, designed to imply that the truth is dangerous to "peaceful coexistence"!

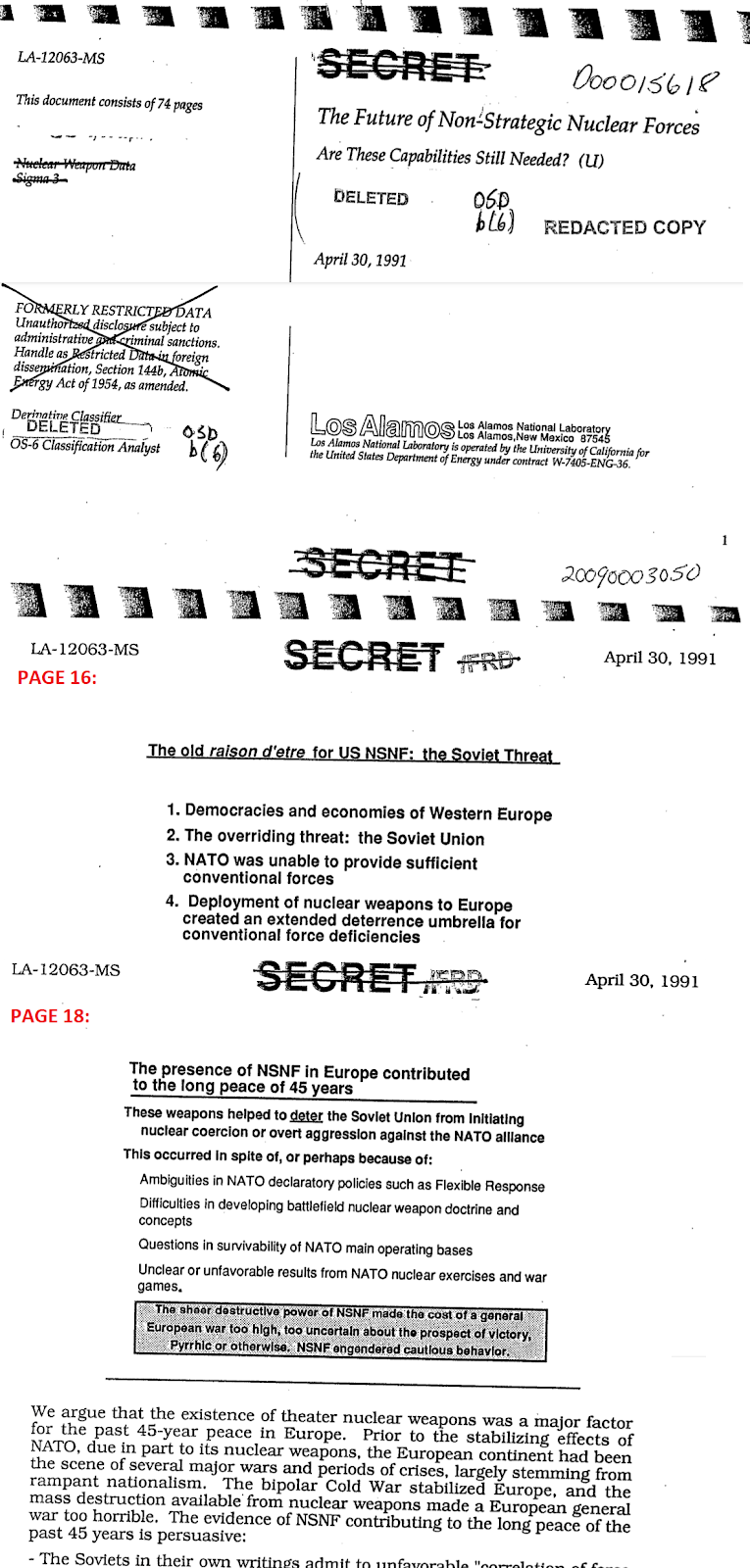

The Top Secret American intelligence report NIE 11-3/8-74 "Soviet Forces for Intercontinental Conflict" warned on page 6: "the USSR has largely eliminated previous US quantitative advantages in strategic offensive forces." Page 9 of the report estimated that Russia's ICBM and SLBM launchers exceeded the USA's 1,700 during 1970, while Russia's on-line missile throw weight had exceeded the USA's one thousand tons back in 1967! Because the USA had more long-range bombers which can carry high-yield bombs than Russia (bombers are more vulnerable to air defences so were not Russia's priority), it took a little longer for Russia to exceed the USA in equivalent megatons, but the 1976 Top Secret American report NIE 11-3/8-76 at page 17 shows that in 1974 Russia exceeded the 4,000 equivalent-megatons payload of USA missiles and aircraft (with less vulnerability for Russia, since most of Russia's nuclear weapons were on missiles, not in SAM-vulnerable aircraft), and by 1976 Russia could deliver 7,000 tons of payload by missiles compared to just 4,000 tons on the USA side. These reports were kept secret for decades to protect the intelligence sources, but they were based on hard evidence. For example, in August 1974 the Hughes Aircraft Company used a specially designed ship (Glomar Explorer, 618 feet long, developed under a secret CIA contract) to recover nuclear weapons and their secret manuals from a Russian submarine which sank in 16,000 feet of water, while in 1976 America was able to take apart the electronics systems in a state-of-the-art Russian MiG-25 fighter which was flown to Japan by defector Viktor Belenko, discovering that it used exclusively EMP-hard miniature vacuum tubes with no EMP-vulnerable solid state components.

Update: Lawrence Livermore National Laboratory's \$3.5 billion National Ignition Facility (NIF) fires ultraviolet laser pulses of 2 MJ at a 2 mm diameter spherical beryllium shell of frozen D+T inside a 1 cm long hollow gold cylinder "hohlraum". The hohlraum is heated to a temperature where it re-radiates energy at much higher frequency, as x-rays, on to the surface of the beryllium ablator of the central fusion capsule, which ablates, causing the capsule to recoil inward. As for the 1962 Ripple II nuclear weapon's secondary stage, the capsule is compressed by a factor of 35, mimicking the isentropic compression mechanism of a miniature Ripple II clean nuclear weapon secondary stage. NIF has now repeatedly achieved nuclear fusion explosions of over 3 MJ, equivalent to nearly 1 kg of TNT explosive. According to a Time article (linked here) about fusion system designer Annie Kritcher, the recent breakthrough was in part due to using a ramping input energy waveform: "success that came thanks to tweaks including shifting more of the input energy to the later part of the laser shot". This feature minimises the rise in entropy due to shock wave generation (which heats the capsule, causing it to expand and resist compression) and increases isentropic compression, which was the principle used by LLNL's J. H. Nuckolls to achieve the 99.9% clean Ripple II 9.96 megaton nuclear test success in Dominic-Housatonic on 30 October 1962. Nuckolls in 1972 published the equation for the idealized input power waveform required for isentropic, optimized compression of fusion fuel (Nature, v239, p139): $P \propto (1 - t)^{-1.875}$, where $t$ is time in units of the transit time (the time taken for the shock to travel to the centre of the fusion capsule), and $-1.875$ is a constant based on the specific heat of the ionized fuel (Nuckolls has provided the basic declassified principles, see extract linked here).

In a fission primary, speed of compression is of the essence in order to beat pre-initiation (where a stray spontaneous fission neutron from Pu-240 or Pu-242 impurities sets off the chain reaction before maximum compression is obtained, reducing the yield). But this danger does not arise when compressing a 100% fusion capsule, where speed is not the key, and fusion is automatically initiated when the compression squeezes the D+T to an adequate density. Provided that the compression mechanism is efficient for fusion, time is not of the essence. The fusion burn efficiency is a function of the compression attained, rather than the speed of implosion. To be clear, the energy reliably released by the 2 mm diameter capsule of fusion fuel was roughly that of a 1 kg TNT explosion. 80% of this is in the form of 14.1 MeV neutrons (ideal for fissioning lithium-7 in LiD to yield more tritium), and 20% is the kinetic energy of fused nuclei (which is quickly converted into x-ray radiation energy by collisions). If this neutron and x-ray energy can be coupled by a scattering container into a small second stage of Li6D, it will be possible to set off a multiplicative chain reaction of fusion capsule stages, rapidly increasing the total fusion energy release. Nuckolls' 9.96 megaton Housatonic (10 kt Kinglet primary and 9.95 Mt Ripple II 100% clean isentropically compressed secondary) of 1962 proved that it is possible to use multiplicative staging whereby lower yield primary nuclear explosions trigger off a fusion stage 1,000 times more powerful than its initiator. OK, the first laser fusion ignitions last year only multiplied the supplied input energy by a factor that's closer to 2 than to the impressive 1,000 of the 1962 Ripple II nuclear warhead, but the problems and solutions are known and the whole basis of NIF for practical fusion power is to improve this technology. 
(The first computers were far more than 1,000 times as bulky and less efficient than those available after a few years of very hard-graft research and development.) Even if initial-stage multiplications are far lower than 1,000, you can simply add more small stages to get around this (they are tiny and won't take up a huge volume or mass in the warhead). The later stages will definitely have fewer asymmetry problems (as in Ripple II), and we know from the Ripple II test back in 1962 that you can definitely multiply by a factor of 1,000 to get from 10 kilotons to 10 megatons in a single multiplicative stage! Another key factor, as shown on our graph linked here, is that you can use cheap natural LiD as fuel once you have a successful D+T reaction, because naturally abundant, cheap Li-7 more readily fissions to yield tritium with the 14.1 MeV neutrons from D+T fusion than expensively enriched Li-6, which is needed to make tritium in nuclear reactors where the fission neutron energy of around 1 MeV is too low to fission Li-7.

It should also be noted that despite a paper about Nuckolls' Ripple II success being openly published in 2021 by Jon Grams, the subject is still being covered up/ignored by the anti-nuclear biased Western media! Grams' article fails to contain the design details, such as the isentropic power delivery curve etc., from Nuckolls' declassified articles that we include in the latest blog post here. One problem regarding "data" causing continuing confusion about the Dominic-Housatonic 30 October 1962 Ripple II test at Christmas Island is made clear in the DASA-1211 report's declassified summary of the sizes, weights and yields of those tests: Housatonic was Nuckolls' fourth and final isentropic test, with the nuclear system inserted into a heavy steel Mk36 drop case, making the overall size 57.2 inches in diameter, 147.9 inches long and 7,139.55 lb mass, i.e. 1.4 kt/lb or about 3.1 kt/kg yield-to-mass ratio for 9.96 Mt yield, which is not impressive for that yield range until you consider (a) that it was 99.9% fusion and (b) that the isentropic design required a heavy hohlraum around the large Ripple II fusion secondary stage to confine x-rays for a relatively long time, during which a slowly rising pulse of x-rays was delivered from the primary to the secondary via very large areas of foam elsewhere in the weapon, to produce isentropic compression. Additionally, the test was made in a hurry before an atmospheric test ban treaty, and this rushed use of a standard air drop steel casing made the tested weapon much heavier than a properly weaponized Ripple II. The key point is that a 10 kt fission device set off a ~10 Mt fusion explosion, a very clean deterrent. 
Applying this Ripple II 1,000-factor multiplicative staging figure directly to this technology for clean nuclear warheads, a 0.5 kg TNT D+T fusion capsule would set off a 0.5 ton TNT 2nd stage of LiD, which would then set off a 0.5 kt 3rd stage "neutron bomb", which could then be used to set off a 500 kt 4th stage or "strategic nuclear weapon". It is therefore now possible not just in principle but in practice, using suitable already-proved technical staging systems used in 1960s nuclear weapon tests successfully, to design 100% clean fusion nuclear warheads! Yes, the details have been worked out, yes the technology has been tested in piecemeal fashion. All that is now needed is a new, but quicker and cheaper, Star Wars program or Manhattan Project style effort to pull the components together. This will constitute a major leap forward in the credibility of the deterrence of aggressors.
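As a quick arithmetic check on the Housatonic yield-to-mass figures quoted from DASA-1211 earlier in this section, here is a minimal sketch that simply divides the quoted yield by the quoted drop-case mass. The variable names and the pound-to-kilogram constant are our own additions, not from the report; only the 9.96 Mt and 7,139.55 lb inputs come from the text.

```python
# Unit-conversion check only: inputs are the yield and mass quoted in the text.
LB_PER_KG = 2.20462262185  # standard conversion factor (assumption: avoirdupois pound)

yield_kt = 9960.0   # 9.96 Mt quoted for Dominic-Housatonic
mass_lb = 7139.55   # quoted overall mass in the Mk36 drop case

mass_kg = mass_lb / LB_PER_KG
kt_per_lb = yield_kt / mass_lb
kt_per_kg = yield_kt / mass_kg

print(f"mass: {mass_kg:.0f} kg")
print(f"yield-to-mass: {kt_per_lb:.2f} kt/lb, {kt_per_kg:.2f} kt/kg")
```

This gives roughly 1.4 kt/lb and a little over 3 kt/kg, in line with the rounded figures quoted for the test.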

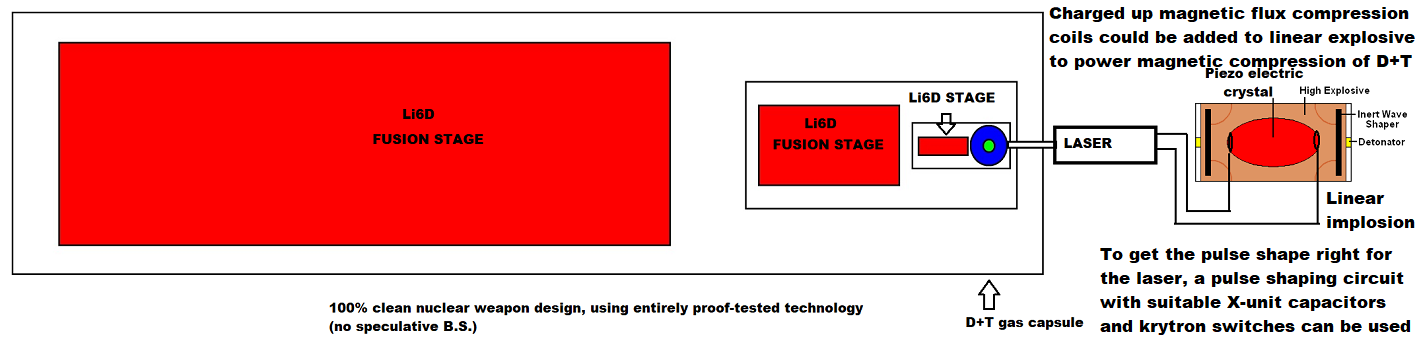

ABOVE: as predicted, the higher the x-ray input laser pulse for the D+T initiator of a clean multiplicatively-staged nuclear deterrent, the lower the effect of plasma instabilities and asymmetries and the greater the fusion burn. To get ignition (where the x-ray energy injected into the fusion hohlraum by the laser is less than the energy released in the D+T fusion burn) they have had to use about 2 MJ delivered in 10 ns or so, equivalent to about 0.5 kg of TNT. But for deterrent use, why use such expensive, delicate x-ray lasers? Why not just use one-shot miniaturised x-ray tubes with megavolt electron acceleration, powered by a suitably ramped pulse from a chemical explosion for magnetic flux compression current generation? At 10% efficiency, you need 0.5 x 10 = 5 kg of TNT! Even at 1% efficiency, 50 kg of TNT will do. Once the D+T gas capsule's hohlraum is well over 1 cm in size, to minimise the risk of imperfections that cause asymmetries, you no longer need focussed laser beams to enter tiny apertures. You might even be able to integrate many miniature flash x-ray tubes (each designed to burn out when firing one pulse of a MJ or so) into a special hohlraum. Humanity urgently needs a technological arms race akin to Reagan's Star Wars project, to deter the dictators from invasions and WWIII. In the conference video above, a question was asked about the real efficiency of the enormous repeat-pulse capable laser system (a capability not required for a nuclear weapon, whose components only need to be used once, unlike lab equipment): the answer is that 300 MJ was required by the lab lasers to fire a 2 MJ pulse into the D+T capsule's x-ray hohlraum, i.e. their lasers are only 0.7% efficient! So why bother? We know - from the practical use of incoherent fission primary stage x-rays to compress and ignite fusion capsules in nuclear weapons - that you simply don't need coherent x-rays from a laser for this purpose. 
The sole reason they are approaching the problem with lasers is that they began their lab experiments decades ago with microscopic sized fusion capsules, and for those you need a tightly focussed beam to insert energy through a tiny hohlraum aperture. But now that they are finally achieving success with much larger fusion capsules (to minimise instabilities that caused the early failures), it may be time to change direction. A whole array of false "no-go theorems" can and will be raised by ignorant charlatan "authorities" against any innovation; this is the nature of the political world. There is some interesting discussion of why clean bombs aren't in existence today: basically, the idealized theory (which works fine for big H-bombs but ignores small-scale asymmetry problems which are important only at low ignition energy) underestimated the input energy required for fusion ignition by a factor of 2000:

"The early calculations on ICF (inertial-confinement fusion) by John Nuckolls in 1972 had estimated that ICF might be achieved with a driver energy as low as 1 kJ. ... In order to provide reliable experimental data on the minimum energy required for ignition, a series of secret experiments—known as Halite at Livermore and Centurion at Los Alamos—was carried out at the nuclear weapons test site in Nevada between 1978 and 1988. The experiments used small underground nuclear explosions to provide X-rays of sufficiently high intensity to implode ICF capsules, simulating the manner in which they would be compressed in a hohlraum. ... the Halite/Centurion results predicted values for the required laser energy in the range 20 to 100MJ—higher than the predictions ..." - Garry McCracken and Peter Stott, Fusion, Elsevier, 2nd ed., p149.

In the final diagram above, we illustrate an example of what could very well occur in the near future, just to really poke a stick into the wheels of "orthodoxy" in nuclear weapons design: is it possible to just use a lot of (perhaps hardened for higher currents, perhaps not) pulsed current driven microwave tubes from kitchen microwave ovens, channelling their energy using waveguides (simply metal tubes, i.e. electrical Faraday cages, which reflect and thus contain microwaves) into the hohlraum, and make the pusher of dipole molecules (like common salt, NaCl) which is a good absorber of microwaves (as everybody knows from cooking in microwave ovens)? It would be extremely dangerous, not to mention embarrassing, if this worked, but nobody had done any detailed research into the possibility due to groupthink orthodoxy and conventional boxed in thinking! Remember, the D+T capsule just needs extreme compression and this can be done by any means that works. Microwave technology is now very well-established. It's no good trying to keep anything of this sort "secret" (either officially or unofficially) since, as history shows, dictatorships are the places where "crackpot"-sounding ideas (such as double-primary Project "49" Russian thermonuclear weapon designs, Russian Sputnik satellites, Russian Novichok nerve agent, Nazi V1 cruise missiles, Nazi V2 IRBMs, etc.) can be given priority by loony dictators. We have to avoid, as Edward Teller put it (in his secret commentary debunking Bethe's false history of the H-bomb, written AFTER the Teller-Ulam breakthrough), "too-narrow" thinking (which Teller said was still in force on H-bomb design even then). Fashionable hardened orthodoxy is the soft underbelly of "democracy" (a dictatorship by the majority, which is always too focussed on fashionable ideas and dismissive of alternative approaches in science and technology). 
Dictatorships (minorities against majorities) have repeatedly demonstrated a lack of concern for the fake "no-go theorems" used by Western anti-nuclear "authorities" to ban anything but fashionable groupthink science.

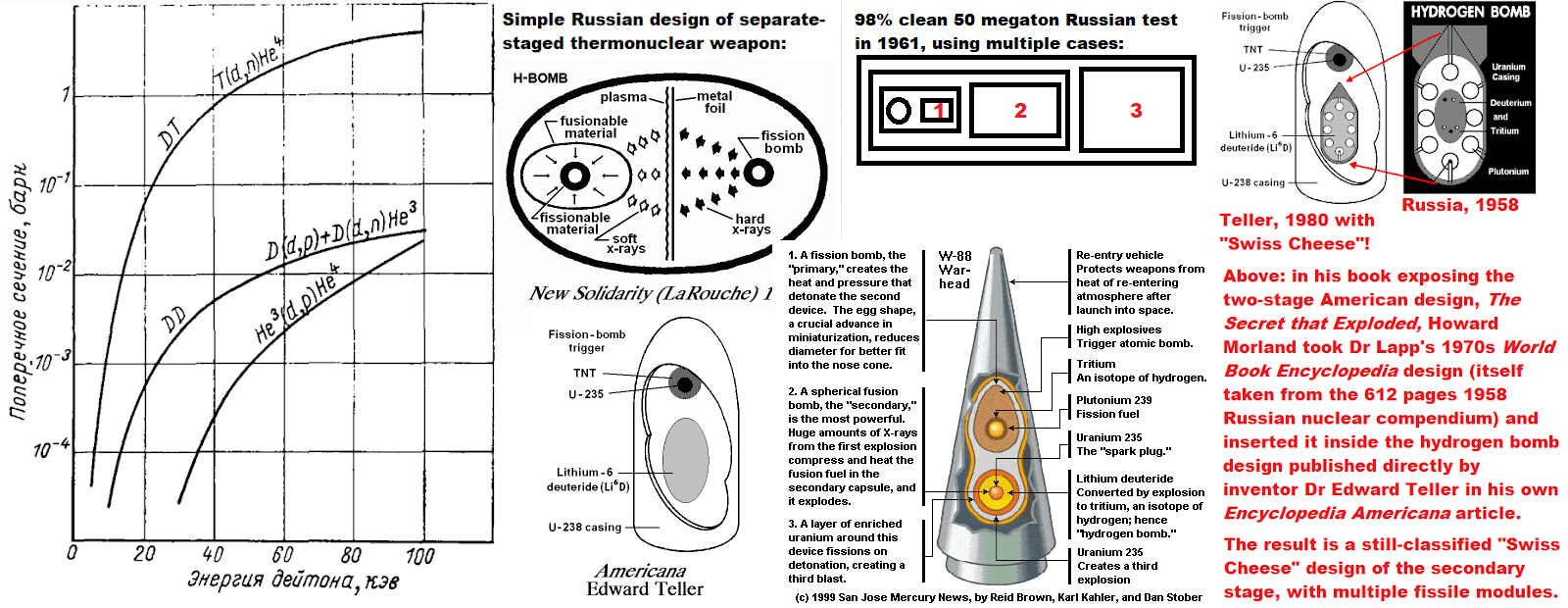

In the diagram below, it appears that the Mk17 only had a single secondary stage like the similar yield 1952 Mike design. The point here is that popular misunderstanding of the simple mechanism of x-ray energy transfer for higher yield weapons may be creating a dogmatic attitude even in secret nuclear weaponeer design labs, where orthodoxy is followed too rigorously. The Russians (see quotes on the latest blog post here) state they used two entire two-stage thermonuclear weapons with a combined yield of 1 megaton to set off their 50 megaton test in 1961. If true, you can indeed use two-stage hydrogen bombs as an "effective primary" to set off another secondary stage, of much higher yield. Can this be reversed in the sense of scaling it down so you have several bombs-within-bombs, all triggered by a really tiny first stage? In other words, can it be applied to neutron bomb design?

Update (15 Dec 2023): PDF uploaded of UK DAMAGE BY NUCLEAR WEAPONS (linked here on Internet Archive), a formerly secret 1,000-page UK and USA nuclear weapon test effects analysis, with the protective measures determined at those tests (not guesswork), relevant to escalation threats by Russia for EU invasion (linked here at wordpress) in response to Ukraine potentially joining the EU (this is now fully declassified without deletions, and in the UK National Archives at Kew):

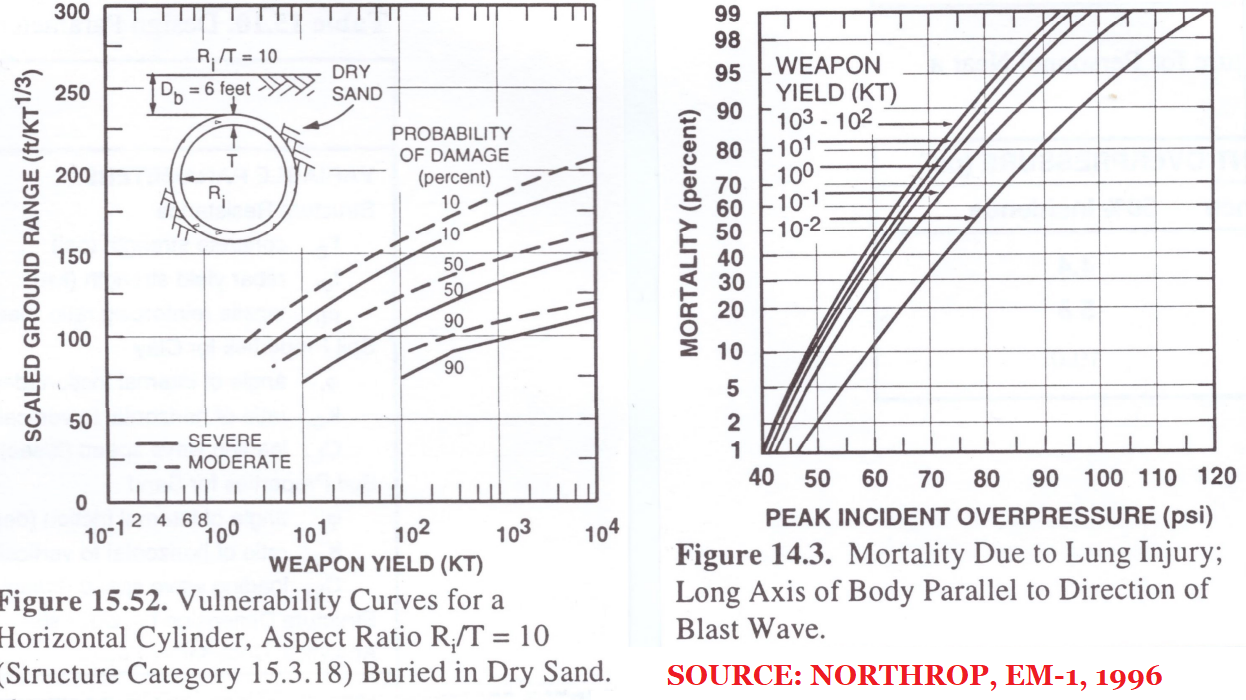

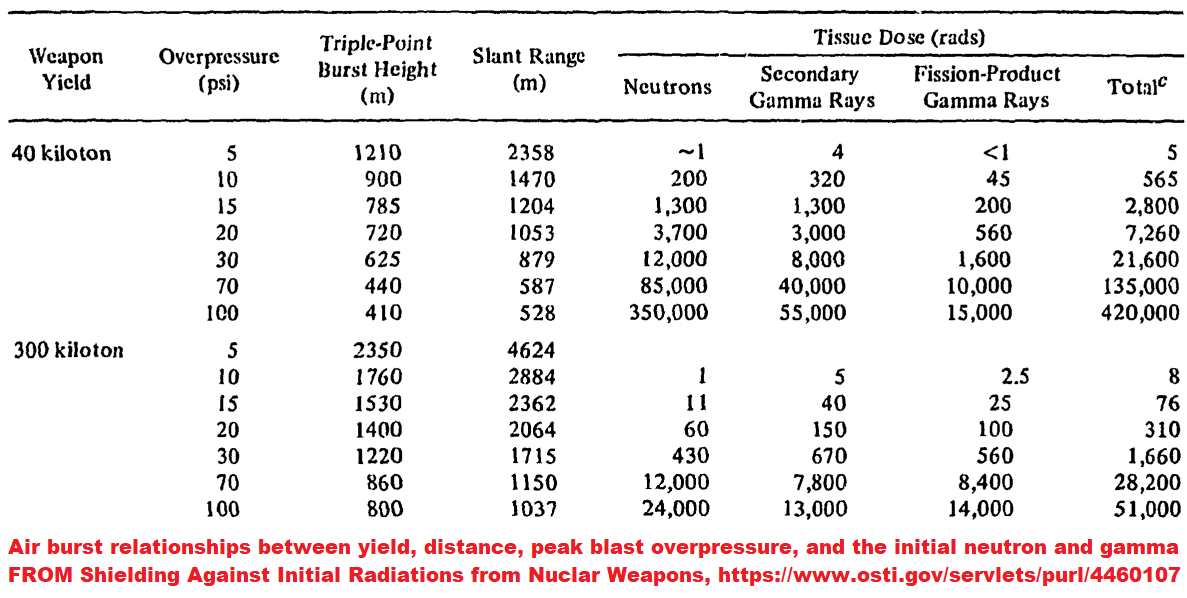

ABOVE: some pages from the originally SECRET ATOMIC 1,000 pages long UK Government Damage by Nuclear Weapons manual (now totally declassified without any deletions of data - unlike American manuals - in the UK National Archives, Kew), summarizing all of the effects data from 1950s British and American atmospheric nuclear weapons tests on military targets and also civil defense shelters tests, costs and safety. Below: some extracts from reports on Australian-British nuclear weapon test operations at Maralinga in 1956 and 1957, Buffalo and Antler, which proved that even at 10 psi peak overpressure for the 15 kt Buffalo-1 shot, the dummy lying prone facing the blast was hardly moved due to the low cross-sectional area exposed to the blast winds, relative to standing dummies which were severely displaced and damaged. The value of trenches in protecting personnel against blast winds and radiation was also proved in tests (gamma radiation shielding of trenches had been proved at an earlier nuclear test in Australia, Operation Hurricane in 1952). (Antler report linked here; Buffalo report linked here.) All of this is still omitted from the American Glasstone and Dolan book "The Effects of Nuclear Weapons". The 1996 Northrop EM-1 (see extracts below showing protection by modern buildings and also simple shelters very close to nuclear tests; note that Northrop's entire set of damage ranges as a function of yield for underground shelters, tunnels and silos is based on two contained deep underground nuclear tests of different yield, scaled to surface burst using the assumption of 5% yield ground coupling relative to the underground shots; this 5% equivalence figure appears to be an exaggeration for compact modern warheads, e.g. the paper “Comparison of Surface and Sub-Surface Nuclear Bursts,” from Steven Hatch, Sandia National Laboratories, to Jonathan Medalia, October 30, 2000, shows a 2% equivalence, e.g. 
Hatch shows that a 1 megaton surface burst produces identical ranges to underground targets as a 20 kt burst at >20 m depth of burst, whereas Northrop would require 50 kt) has not been openly published, despite such protection being used in Russia! This proves heavy bias against credible tactical nuclear deterrence of the invasions that trigger major wars that could escalate into nuclear war (Russia has 2000+ dedicated neutron bombs; we don't!) and against simple nuclear proof tested civil defence, which makes such deterrence credible and of course is also of validity against conventional wars, severe weather, peacetime disasters, etc. The basic fact is that nuclear weapons can deter/stop invasions, unlike the conventional weapons that cause mass destruction, and nuclear collateral damage is eliminated easily for nuclear weapons by using them on military targets, since at collateral damage distances all the effects are sufficiently delayed in arrival (unlike the case for the smaller areas affected by conventional weapons). As with Hitler's stockpile of 12,000 tons of tabun nerve gas, whose use was deterred by proper defences (gas masks for all civilians, as well as biological agent anthrax and mustard gas retaliation capacity), it is possible to deter escalation within a world war with a crazy terrorist if people are protected by defence and deterrence:

J. R. Oppenheimer (opposing Teller), February 1951: "It is clear that they can be used only as adjuncts in a military campaign which has some other components, and whose purpose is a military victory. They are not primarily weapons of totality or terror, but weapons used to give combat forces help they would otherwise lack. They are an integral part of military operations. Only when the atomic bomb is recognized as useful insofar as it is an integral part of military operations, will it really be of much help in the fighting of a war, rather than in warning all mankind to avert it." (Quotation: Samuel Cohen, Shame, 2nd ed., 2005, page 99.)

‘The Hungarian revolution of October and November 1956 demonstrated the difficulty faced even by a vastly superior army in attempting to dominate hostile territory. The [Soviet Union] Red Army finally had to concentrate twenty-two divisions in order to crush a practically unarmed population. ... With proper tactics, nuclear war need not be as destructive as it appears when we think of [World War II nuclear city bombing like Hiroshima]. The high casualty estimates for nuclear war are based on the assumption that the most suitable targets are those of conventional warfare: cities to interdict communications ... With cities no longer serving as key elements in the communications system of the military forces, the risks of initiating city bombing may outweigh the gains which can be achieved. ...

‘The elimination of area targets will place an upper limit on the size of weapons it will be profitable to use. Since fall-out becomes a serious problem [i.e. fallout contaminated areas which are so large that thousands of people would need to evacuate or shelter indoors for up to two weeks] only in the range of explosive power of 500 kilotons and above, it could be proposed that no weapon larger than 500 kilotons will be employed unless the enemy uses it first. Concurrently, the United States could take advantage of a new development which significantly reduces fall-out by eliminating the last stage of the fission-fusion-fission process.’

- Dr Henry Kissinger, Nuclear Weapons and Foreign Policy, Harper, New York, 1957, pp. 180-3, 228-9.

(Quoted in 2006 on this blog here.)

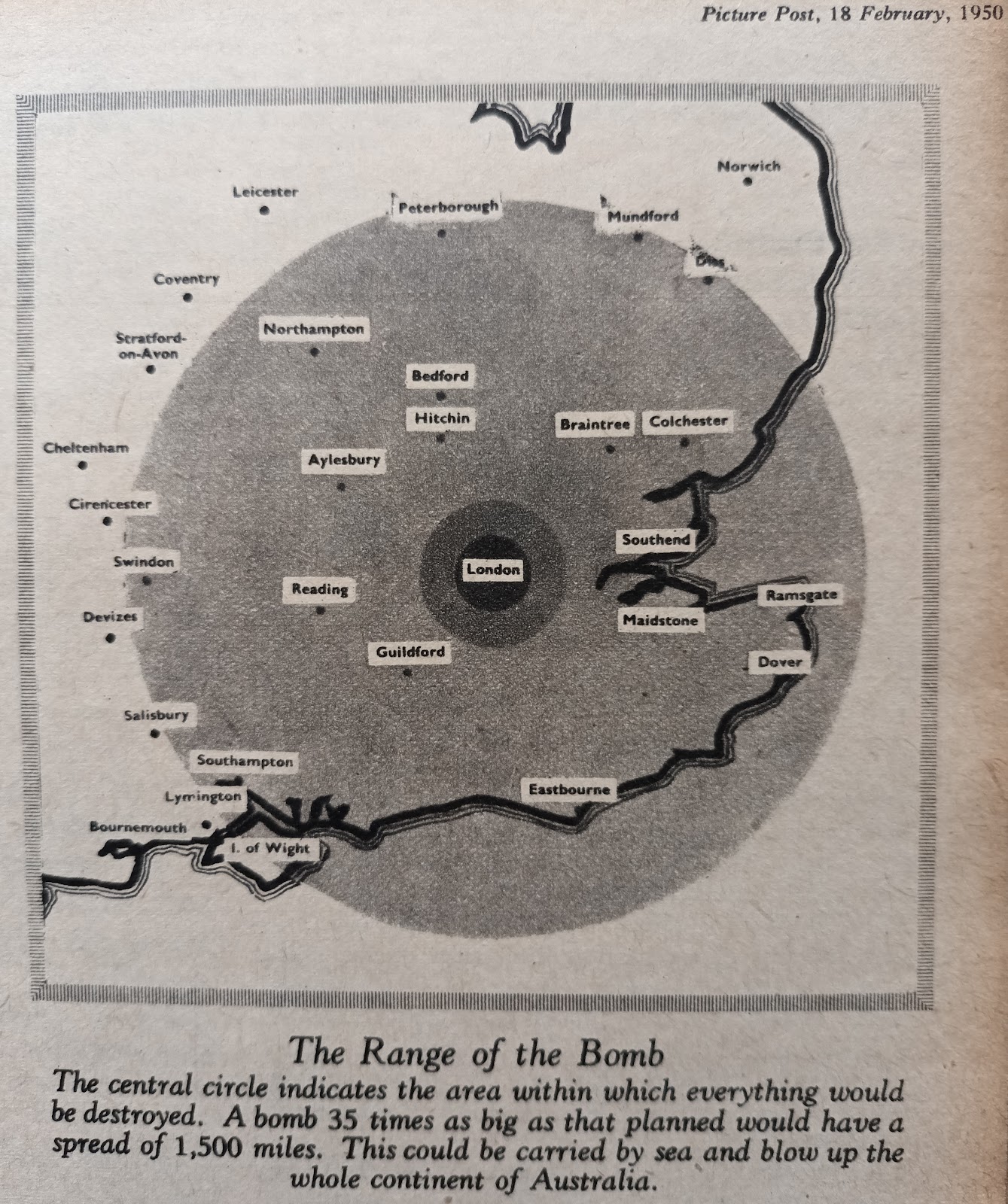

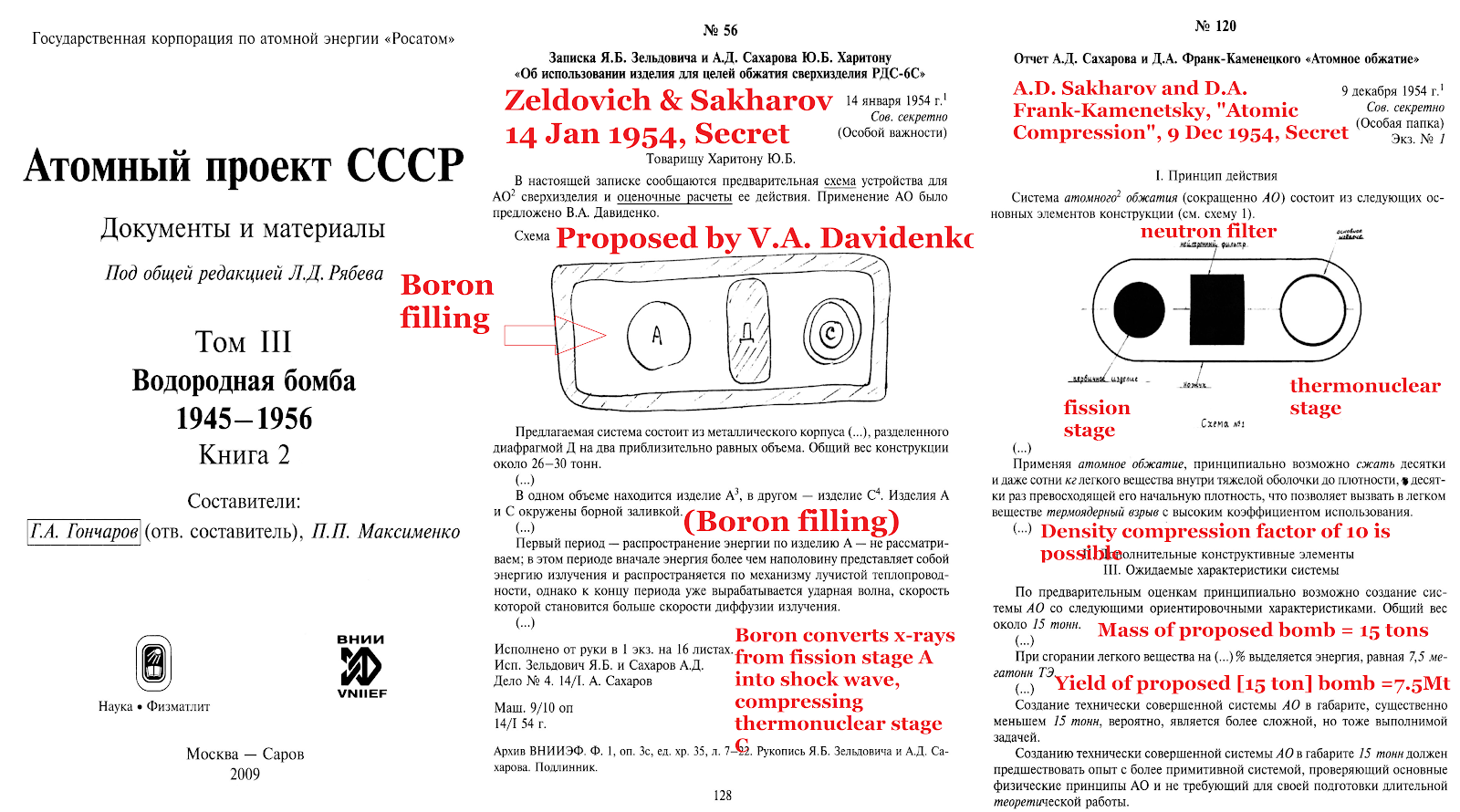

ABOVE: 16 February 1950 Daily Express editorial on H Bomb problem due to the fact that the UN is another virtue signalling but really war mongering League of Nations (which oversaw Nazi appeasement and the outbreak of WWII); however Fuchs had attended the April 1946 Super Conference during which the Russian version of the H-bomb, involving isentropic radiation implosion of a separate low-density fusion stage (unlike Teller's later dense metal ablation rocket implosion secondary TX14 Alarm Clock and Sausage designs), was discussed and then given to Russia. The media was made aware only that Fuchs had given the fission bomb to Russia. The FBI later visited Fuchs in British jail, showed him a film of Harry Gold (whom Fuchs identified as his contact while at Los Alamos) and also gave Fuchs a long list of secret reports to mark off individually so that they knew precisely what Stalin had been given. Truman didn't order H-bomb research and development because Fuchs gave Stalin the A-bomb, but because he gave them the H-bomb. The details of the Russian H-bomb are still being covered up by those who want a repetition of 1930s appeasement, or indeed the deliberate ambiguity of the UK Cabinet in 1914 which made it unclear what the UK would do if Germany invaded Belgium, allowing the enemy to exploit that ambiguity, starting a world war. The key fact usually covered up here (Richard Rhodes, Chuck Hansen, and the whole American "expert nuclear arms community" all misleadingly claim that Teller's Sausage H-bomb design with a single primary and a dense ablator around a cylindrical secondary stage - uranium, lead or tungsten - is the "hydrogen bomb design") is that two attendees of the April 1946 Super Conference, the report author Egon Bretscher and the radiation implosion discoverer Klaus Fuchs, were British, and both contributed key H-bomb design principles to the Russian and British weapons (discarded for years by America). 
Egon Bretscher for example wrote up the Super Conference report, during which attendees suggested various ways to try to achieve isentropic compression of low-density fusion fuel (a concept discarded by Teller's 1951 Sausage design, but used by Russia and re-developed in America in Nuckolls' 1962 Ripple tests), and after Teller left Los Alamos, Bretscher took over work on Teller's Alarm Clock layered fission-fusion spherical hybrid device before Bretscher himself left Los Alamos and became head of nuclear physics at Harwell, UK, submitting a UK report together with Fuchs (head of theoretical physics at Harwell) which led to Sir James Chadwick's UK paper on a three-stage thermonuclear Super bomb, which formed the basis of Penney's work at the UK Atomic Weapons Research Establishment. While Bretscher had worked on Teller's hybrid Alarm Clock (which originated two months after Fuchs left Los Alamos), Fuchs co-authored a hydrogen bomb patent with John von Neumann, in which radiation implosion and ionization implosion were used. Between them, Bretscher and Fuchs had all the key ingredients. Fuchs leaked them to Russia and the problem persists today in international relations.

There are four ways of dealing with aggressors: conquest (fight them), intimidation (deter them), fortification (shelter against their attacks; historically used as castles, walled cities and even walled countries in the case of China's 1,100 mile long Great Wall and Hadrian's Wall, while the USA has used the Pacific and Atlantic as successful moats against invasion, at least since Britain burned Washington D.C. in 1814, during the War of 1812), and friendship (which if you are too weak to fight, means appeasing them, as Chamberlain shook hands with Hitler for worthless peace promises). These are not mutually exclusive: you can use combinations. If you are very strong in offensive capability and also have walls to protect you while your back is turned, you can - as Teddy Roosevelt put it (quoting a West African proverb): "Speak softly and carry a big stick." But if you are weak, speaking softly makes you a target, vulnerable to coercion. This is why we don't send troops directly to Ukraine. When elected in 1960, Kennedy introduced "flexible response" to replace Dulles' "massive retaliation", by addressing the need to deter large provocations without being forced to decide between the unwelcome options of "surrender or all-out nuclear war" (Herman Kahn called this flexible response "Type 2 Deterrence"). This was eroded by both Russian civil defense and their emerging superiority in the 1970s: a real missiles and bombers gap emerged in 1972 when the USSR reached and then exceeded the 2,200 of the USA, while in 1974 the USSR achieved parity at 3,500 equivalent megatons (then exceeded the USA), and finally today Russia has over 2,000 dedicated clean enhanced neutron tactical nuclear weapons and we have none (except low-neutron output B61 multipurpose bombs). 
(Robert Jastrow's 1985 book How to Make Nuclear Weapons Obsolete was the first to have graphs showing the downward trend in nuclear weapon yields created by the development of miniaturized MIRV warheads for missiles and tactical weapons: he shows that the average size of US warheads fell from 3 megatons in 1960 to 200 kilotons in 1980, and that the total fell from 12,000 megatons in 1960 to 3,000 megatons in 1980.)

The term "equivalent megatons" roughly takes account of the fact that the areas of cratering, blast and radiation damage scale not linearly with energy but as something like the 2/3 power of energy release; but note that close-in cratering scales as a significantly smaller power of energy than 2/3, while blast wind drag displacement of jeeps in open desert scales as a larger power of energy than 2/3. Comparisons of equivalent megatonnage show, for example, that WWII's 2 megatons of TNT in the form of about 20,000,000 separate conventional 100 kg (0.1 ton, i.e. $10^{-7}$ megaton) explosives is equivalent to $20{,}000{,}000 \times (10^{-7})^{2/3} \approx 431$ separate 1 megaton explosions! The point is, nuclear weapons are not of a different order of magnitude to conventional warfare, because: (1) devastated areas don't scale in proportion to energy release, (2) the number of nuclear weapons is very much smaller than the number of conventional bombs dropped in conventional war, and (3) because of radiation effects like neutrons and intense EMP, it is possible to eliminate physical destruction by nuclear weapons by a combination of weapon design (e.g. very clean bombs like 99.9% fusion Dominic-Housatonic, or 95% fusion Redwing-Navajo) and burst altitude or depth for hard targets, and create a weapon that deters invasions credibly (without lying local fallout radiation hazards), something none of the biased "pacifist disarmament" lobbies (which attract Russian support) tell you! There's a big problem with propaganda here.
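The equivalent-megatonnage comparison above is simple enough to check directly. The sketch below is only an illustration of the 2/3-power scaling rule as stated in the text; the function name and its parameters are our own, and the exponent is the rough rule of thumb quoted above, not a precise law.

```python
def equivalent_megatons(yield_megatons: float, count: int = 1,
                        exponent: float = 2.0 / 3.0) -> float:
    """Equivalent megatonnage of `count` explosions of equal yield.

    Uses the rough rule from the text that damaged area scales as
    (energy release) ** (2/3). The exponent is an approximation.
    """
    return count * yield_megatons ** exponent


# WWII example from the text: about 20,000,000 bombs of 100 kg (0.1 ton) each.
# 0.1 ton of TNT is 1e-7 megatons.
wwii = equivalent_megatons(yield_megatons=1e-7, count=20_000_000)
print(f"WWII conventional bombing: about {wwii:.0f} equivalent megatons")

# A single 1 megaton explosion is, by definition, 1 equivalent megaton.
print(equivalent_megatons(1.0))
```

Running this reproduces the figure of about 431 equivalent megatons from 2 megatons of total energy, which is the point of the comparison: many small explosions cover far more area than the same energy released in one place.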

ILLUSTRATION: the threat of WWII and the need to deter it was massively derided by popular pacifism which tended to make "jokes" of the Nazi threat until too late (example of 1938 UK fiction on this above; Charlie Chaplin's film "The Great Dictator" is another example), so three years after the Nuremberg Laws and five years after illegal rearmament was begun by the Nazis, in the UK crowds of "pacifists" in Downing Street, London, support friendship with the top racist, dictatorial Nazis in the name of "world peace". The Prime Minister used underhand techniques to try to undermine appeasement critics like Churchill and also later to get W. E. Johns fired from both editorships of Flying (weekly) and Popular Flying (monthly) to make it appear everybody "in the know" agreed with his actions, hence the contrived "popular support" for collaborating with terrorists depicted in these photos. The same thing persists today; in the 1920s and 1930s "pacifism" was driven by claims that explosions, fire and poison gas would kill everybody in a knockout blow the moment war broke out.

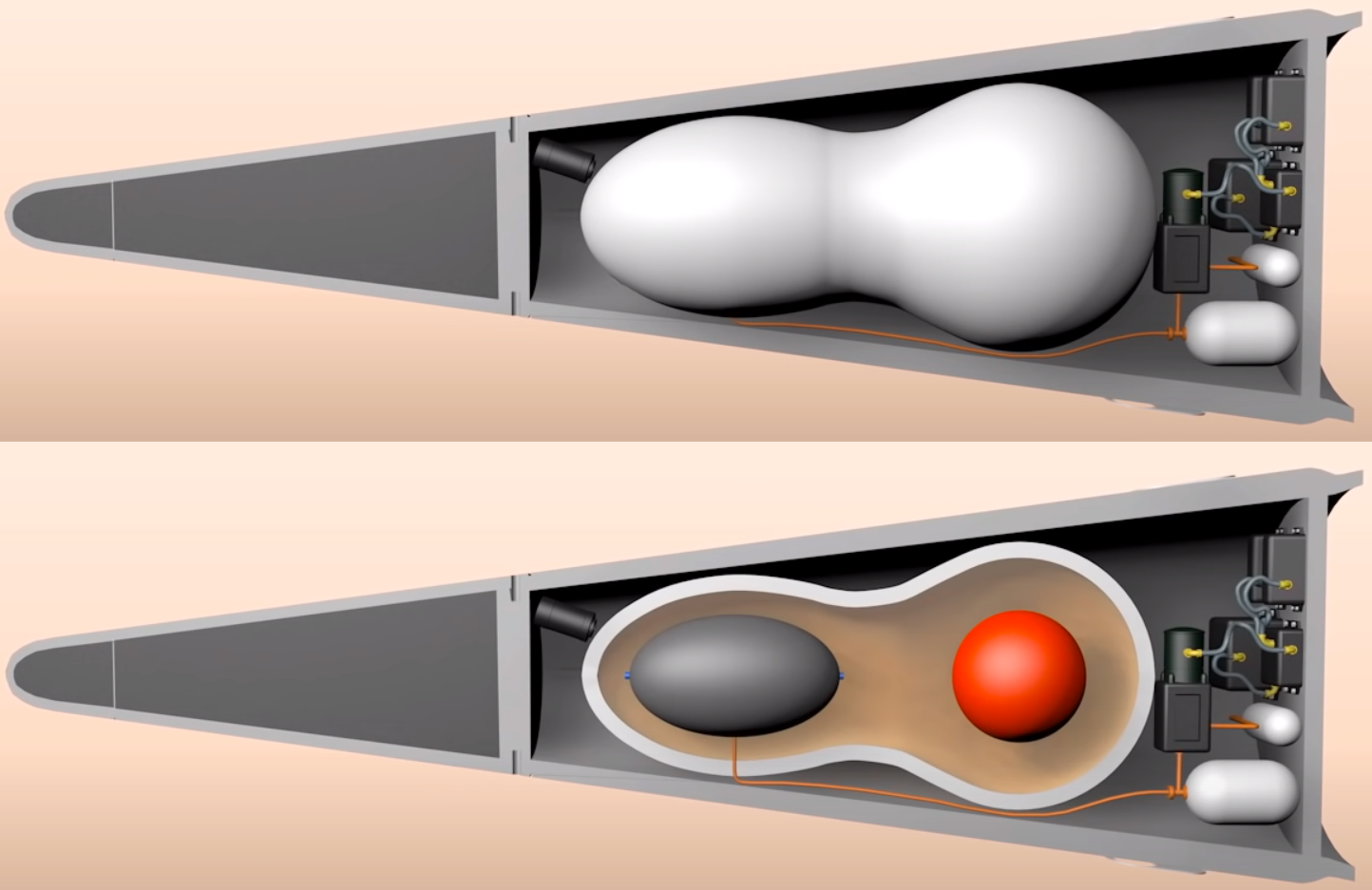

Update (30 January 2024): on the important world crisis, https://vixra.org/abs/2312.0155 gives a detailed review of "Britain and the H-bomb" (linked here), and why the "nuclear deterrence issue" isn't about "whether we should deter evil", but precisely what design of nuclear warhead we should have in order to do that cheaply, credibly, safely, and efficiently without guaranteeing either escalation or the failure of deterrence. When we disarmed our chemical and biological weapons, it was claimed that the West could easily deter those weapons using strategic nuclear weapons to bomb Moscow (which has shelters, unlike us). That failed when Putin used sarin and chlorine to prop up Assad in Syria, and Novichok in the UK to kill Dawn Sturgess in 2018. So it's just not a credible deterrent to say you will bomb Moscow if Putin invades Europe or uses his 2000 tactical nuclear weapons. An even more advanced deterrent, the 100% clean very low yield (or any yield) multiplicative staged design without any fissile material whatsoever, is just around the corner.
Clean secondary stages have been proof-tested successfully for example in the 100% clean Los Alamos Redwing Navajo secondary, and the 100% clean Ripple II secondary tested 30 October 1962, and the laser ignition of very tiny fusion capsules to yield more energy than supplied has been done on 5 December 2022 when a NIF test delivered 2.05 MJ (the energy of about 0.5 kg of TNT) to a fusion capsule which yielded 3.15 MJ, so all that is needed is to combine both ideas in a system whereby suitably sized second stages - ignited in the first place by a capacitative charged circuit sending a pulse of energy to a suitable laser system (the schematic shown is just a sketch of principle - more than one laser would possibly be required for reliability of fusion ignition) acting on a tiny fusion capsule as shown - are encased to two-stage "effective primaries" which each become effective primaries of bigger systems, thus a geometric series of multiplicative staging until the desired yield is reached. Note that the actual tiny first T+D capsule can be compressed by one-shot lasers - compact lasers used way beyond their traditional upper power limit and burned out in firing a single pulse - in the same way the gun assembly of the Hiroshima bomb was based on a one-shot gun. In other words, forget all about textbook gun design. The Hiroshima bomb gun assembly system only had to be fired once unlike a field artillery piece which has to be fired many thousands of times before metal fatigue sets in. Thus, by analogy, the lasers - which can be powered by ramping current pulses from magnetic flux compressor systems - for use in a clean bomb will be much smaller and lighter than current lab gear which is designed to be used thousands of times in repeated experiments. The diagram below shows cylindrical Li6D stages throughout for a compact bomb shape, but spherical stages can be used, and once a few stages get fired, the flux of 14 MeV neutrons is sufficient to go to cheap natural LiD.
To fit it into a MIRV warhead, the low density of LiD means that such a clean warhead will have a low nuclear yield, which means a tactical Cohen type neutron deterrent of the invasions that cause big wars. It should also be noted that in 1944 von Neumann suggested that T + D inside the core of the fission weapon would be compressed by "ionization compression" during fission (where a higher density ionized plasma compresses a lower density ionized plasma, i.e. the D + T plasma), an idea that was - years later - named the Internal Booster principle by Teller; see Frank Close, "Trinity", Allen Lane, London, 2019, pp158-159 where Close argues that during the April 1946 Superbomb Conference, Fuchs extended von Neumann's 1944 internal fusion boosting idea to an external D + T filled BeO walled capsule:

"Fuchs reasoned that [the very low energy, 1-10 keV, approximately 10-100 times lower energy than medical] x-rays from the [physically separated] uranium explosion would reach the tamper of beryllium oxide, heat it, ionize the constituents and cause them to implode - the 'ionization implosion' concept of von Neumann but now applied to deuterium and tritium contained within beryllium oxide. To keep the radiation inside the tamper, Fuchs proposed to enclose the device inside a casing impervious to radiation. The implosion induced by the radiation would amplify the compression ... and increase the chance of the fusion bomb igniting. The key here is 'separation of the atomic charge and thermonuclear fuel, and compression of the latter by radiation travelling from the former', which constitutes 'radiation implosion'."

(This distinction between von Neumann's "ionization implosion" INSIDE the tamper, of denser tamper expanding and thus compressing lower density fusion fuel inside, and Fuchs' OUTSIDE capsule "radiation implosion", is key even today for isentropic H-bomb design; it seems Teller's key breakthroughs were not separate stages or implosion but rather radiation mirrors and ablative recoil shock compression, where radiation is used to ablate a dense pusher of Sausage designs like Mike in 1952 etc., a distinction not to be confused for the 1944 von Neumann and 1946 Fuchs implosion mechanisms! It appears Russian H-bombs used von Neumann's "ionization implosion" and Fuchs's "radiation implosion" for RDS-37 on 22 November 1955 and also in their double-primary 23 February 1958 test and subsequently, where their fusion capsules reportedly contained a BeO or other low-density outer coating, which would lead to quasi-isentropic compression, more effective for low density secondary stages than purely ablative recoil shock compression. This accounts for the continuing classification of the April 1946 Superbomb Conference (the extract of 32 pages linked here is so severely redacted that it is less helpful than the brief but very lucid summary of its technical content, in the declassified FBI compilation of reports concerning data Klaus Fuchs sent to Stalin, linked here!). Teller had all the knowledge he needed in 1946, but didn't go ahead because he made the stupid error of killing progress off by his own "no-go theorem" against compression of fusion fuel. Teller did a "theoretical" calculation in which he claimed that compression has no effect on the amount of fusion burn because the compressed system is simply scaled down in size so that the same efficiency of fusion burn occurs, albeit faster, and then stops as the fuel thermally expands. This was wrong. 
Teller discusses the reason for his great error in technical detail during his tape-recorded interview by Chuck Hansen at Los Alamos on 7 June 1993 (C. Hansen, Swords of Armageddon, 2nd ed., pp. II-176-7):

"Now every one of these [fusion] processes varied with the square of density. If you compress the thing, then in one unit's volume, each of the 3 important processes increased by the same factor ... Therefore, compression (seemed to be) useless. Now when ... it seemed clear that we were in trouble, then I wanted very badly to find a way out. And it occurred to me that an unprecedentedly strong compression will just not allow much energy to go into radiation. Therefore, something had to be wrong with my argument and then, you know, within minutes, I knew what must be wrong ... [energy] emission occurs when an electron and a nucleus collide. Absorption does not occur when a light quantum and a nucleus ... or ... electron collide; it occurs when a light quantum finds an electron and a nucleus together ... it does not go with the square of the density, it goes with the cube of the density." (This very costly theoretical error, wasting five years 1946-51, could have been resolved by experimental nuclear testing. There is always a risk of this in theoretical physics, which is why experiments are done to check calculations before prizes are handed out. The ban on nuclear testing is a luddite opposition to technological progress in improving deterrence.)

(This 1946-51 theoretical "no-go theorem" anti-compression error of Teller's, which was contrary to the suggestion of compression at the April 1946 superbomb conference as Teller himself refers to on 14 August 1952, and which was corrected only by comparison of the facts about compression validity in pure fission cores in Feb '51 after Ulam's argument that month for fission core compression by lens focussed primary stage shock waves, did not merely lead to Teller's dismissal of vital compression ideas. It also led to his false equations - exaggerating the cooling effect of radiation emission - causing underestimates of fusion efficiency in all theoretical calculations done of fusion until 1951! For this reason, Teller later repudiated the calculations that allegedly showed his Superbomb would fizzle; he argued that if it had been tested in 1946, the detailed data obtained - regardless of whatever happened - would have at least tested the theory which would have led to rapid progress, because the theory was wrong. The entire basis of the cooling of fusion fuel by radiation leaking out was massively exaggerated until Lawrence Livermore weaponeer John Nuckolls showed that there is a very simple solution: use baffle re-radiated, softened x-rays for isentropic compression of low-density fusion fuel, e.g. very cold 0.3 kev x-rays rather than the usual 1-10 kev cold-warm x-rays emitted directly from the fission primary. Since the radiation losses are proportional to the fourth-power of the x-ray energy or temperature, losses are virtually eliminated, allowing very efficient staging as for Nuckolls' 99.9% 10 Mt clean Ripple II, detonated on 30 October 1962 at Christmas Island. Teller's classical Superbomb was actually analyzed by John C. 
Solem in a 15 December 1978 report, A modern analysis of Classical Super, LA-07615, according to a Freedom of Information Act request filed by mainstream historian Alex Wellerstein, FOIA 17-00131-H, 12 June 2017; according to a list of FOIA requests at https://www.governmentattic.org/46docs/NNSAfoiaLogs_2016-2020.pdf. However, a Google search for the documents Dr Wellerstein requested shows that only a few are yet available at the US Gov DOE Opennet OSTI database or otherwise online, e.g. LA-643 by Teller, On the development of Thermonuclear Bombs, dated 16 Feb. 1950. The page linked here stating that report was "never classified" is mistaken! One oddity about Teller's anti-compression "no-go theorem" is that even if fusion rates were independent of density, you would still want compression of fissile material in a secondary stage such as a radiation imploded Alarm Clock, because the whole basis of implosion fission bombs is the benefit of compression; another issue is that even if fusion rates are unaffected by density, inward compression would still help to delay the expansion of the fusion system which leads to cooling and quenching of the fusion burn.)

ABOVE: the FBI file on Klaus Fuchs contains a brief summary of the secret April 1946 Super Conference at Los Alamos which Fuchs attended, noting that compression of fusion fuel was discussed by Lansdorf during the morning session on 19 April, attended by Fuchs, and that: "Suggestions were made by various people in attendance as to the manner of minimizing the rise in entropy during compression." This fact is vitally interesting, since it proves that an effort was being made then to secure isentropic compression of low-density fusion fuel in April 1946, sixteen years before John H. Nuckolls tested the isentropically compressed Ripple II device on 30 October 1962, giving a 99.9% clean 10 megaton real H-bomb! So the Russians were given a massive head start on this isentropic compression of low-density fusion fuel for hydrogen bombs, used (according to Trutnev) in both the single primary tests like RDS-37 in November 1955 and also in the double-primary designs which were 2.5 times more efficient on a yield-to-mass basis, tested first on 23 February 1958! According to the FBI report, the key documents Fuchs gave to Russia were LA-551, Prima facie proof of the feasibility of the Super, 15 Apr 1946 and the LA-575 Report of conference on the Super, 12 June 1946. Fuchs also handed over to Russia his own secret Los Alamos reports, such as LA-325, Initiator Theory, III. Jet Formation by the Collision of Two Surfaces, 11 July 1945, Jet Formation in Cylindrical Implosion with 16 Detonation Points, Secret, 6 February 1945, and Theory of Initiators II, Melon Seed, Secret, 6 January 1945.
Note the reference to Bretscher attending the Super Conference with Fuchs; Teller in a classified 50th anniversary conference at Los Alamos on the H-bomb claimed that after he (Teller) left Los Alamos for Chicago Uni in 1946, Bretscher continued work on Teller's 31 August 1946 "Alarm Clock" nuclear weapon (precursor of the Mike sausage concept etc) at Los Alamos; it was this layered uranium and fusion fuel "Alarm Clock" concept which led to the departure of Russian H-bomb design from American H-bomb design, simply because Fuchs left Los Alamos in June 1946, well before Teller invented the Alarm Clock concept on 31 August 1946 (Teller remembered the date precisely simply because he invented the Alarm Clock on the day his daughter was born, 31 August 1946! Teller and Richtmyer also developed a variant called "Swiss Cheese", with small pockets or bubbles of expensive fusion fuels, dispersed throughout cheaper fuel, in order to kindle a more cost-effective thermonuclear reaction; this later inspired the fission and fusion boosted "spark plug" ideas in later Sausage designs; e.g. security cleared Los Alamos historian Anne Fitzpatrick stated during her 4 March 1997 interview with Robert Richtmyer, who co-invented the Alarm Clock with Teller, that the Alarm Clock evolved into the spherical secondary stage of the 6.9 megaton Castle-Union TX-14 nuclear weapon!).

In fact (see Lawrence Livermore National Laboratory nuclear warhead designer Nuckolls' explanation in report UCRL-74345): "The rates of burn, energy deposition by charged reaction products, and electron-ion heating are proportional to the density, and the inertial confinement time is proportional to the radius. ... The burn efficiency is proportional to the product of the burn rate and the inertial confinement time ...", i.e. the fusion burn rate is directly proportional to the fuel density, which in turn is of course inversely proportional to the cube of its radius. But the inertial confinement time for fusion to occur is proportional to the radius, so the fusion stage efficiency in a nuclear weapon is the product of the burn rate (i.e., 1/radius^3) and time (i.e., radius), so efficiency ~ radius/(radius^3) ~ 1/radius^2. Therefore, for a given fuel temperature, the total fusion burn, or the efficiency of the fusion stage, is inversely proportional to the square of the compressed radius of the fuel! (Those condemning Teller's theoretical errors or "arrogance" should be aware that he pushed hard all the time for experimental nuclear tests of his ideas, to check if they were correct, exactly the right thing to do scientifically and others who read his papers had the opportunity to point out any theoretical errors, but was rebuffed by those in power, who used a series of contrived arguments to deny progress, based upon what Harry would call "subconscious bias", if not arrogant, damning, overt bigotry against the kind of credible, overwhelming deterrence which had proved lacking a decade earlier, leading to WWII. 
This callousness towards human suffering in war and under dictatorship existed in some UK physicists too: Joseph Rotblat's hatred of anything to deter Russia be it civil defense or tactical neutron bombs of the West - he had no problem smiling and patting Russia's neutron bomb when visiting their labs during cosy groupthink deluded Pugwash campaigns for Russian-style "peaceful collaboration" - came from deep family communist convictions, since his brother was serving in the Red Army in 1944 when he alleged he heard General Groves declare that the bomb must deter Russia! Rotblat stated he left Los Alamos as a result. The actions of these groups are analogous to the "Cambridge Scientists Anti-War Group" in the 1930s. After Truman ordered a H-bomb, Bradbury at Los Alamos had to start a "Family Committee" because Teller had a whole "family" of H-bomb designs, ranging from the biggest, "Daddy", through various "Alarm Clocks", all the way down to small internally-boosted fission tactical weapons. From Teller's perspective, he wasn't putting all eggs in one basket.)

Above: declassified illustration from a January 1949 secret report by the popular physics author and Los Alamos nuclear weapons design consultant George Gamow, showing his suggestion of using x-rays from both sides of a cylindrically imploded fission device to expose two fusion capsules to x-rays to test whether compression (fusion in BeO box on right side) helps, or is unnecessary (capsule on left side). Neutron counters detect 14.1 MeV T+D neutrons using time-of-flight method (higher energy neutrons travel faster than ~1 MeV fission stage neutrons, arriving at detectors first, allowing discrimination of the neutron energy spectrum by time of arrival). It took over two years to actually fire this 225 kt shot (8 May 1951)! No wonder Teller was outraged. A few interesting reports by Teller and also Oppenheimer's secret 1949 report opposing the H bomb project as it then stood on the grounds of low damage per dollar - precisely the exact opposite of the "interpretation" the media and gormless fools will assert until the cows come home - are linked here. The most interesting is Teller's 14 August 1952 Top Secret paper debunking Hans Bethe's propaganda, by explaining that contrary to Bethe's claims, Stalin's spy Klaus Fuchs had the key "radiation implosion" - see second para on p2 - secret of the H-bomb because he attended the April 1946 Superbomb Conference which was not even attended by Bethe! It was this very fact in April 1946, noted by two British attendees of the 1946 Superbomb Conference before collaboration was ended later in the year by the 1946 Atomic Energy Act, that led to Sir James Chadwick's secret use of "radiation implosion" for stages 2 and 3 of his triple staged H-bomb report the next month, "The Superbomb", a still secret document that inspired Penney's original Tom/Dick/Harry staged and radiation imploded H-bomb thinking, which is summarized by security cleared official historian Arnold's Britain and the H-Bomb.
Teller's 24 March 1951 letter to Los Alamos director Bradbury was written just 15 days after his historic Teller-Ulam 9 March 1951 report on radiation coupling and "radiation mirrors" (i.e. plastic casing lining to re-radiate soft x-rays on to the thermonuclear stage to ablate and thus compress it), and states: "Among the tests which seem to be of importance at the present time are those concerned with boosted weapons. Another is connected with the possibility of a heterocatalytic explosion, that is, implosion of a bomb using the energy from another, auxiliary bomb. A third concerns itself with tests on mixing during atomic explosions, which question is of particular importance in connection with the Alarm Clock."

There is more to Fuchs' influence on the UK H-bomb than I go into in that paper; Chapman Pincher alleged that Fuchs was treated with special leniency at his trial and later he was given early release in 1959 because of his contributions and help with the UK H-bomb as author of the key Fuchs-von Neumann x-ray compression mechanism patent. For example, Penney visited Fuchs in June 1952 in Stafford Prison; see pp309-310 of Frank Close's 2019 book "Trinity". Close argues that Fuchs gave Penney a vital tutorial on the H-bomb mechanism during that prison visit. That wasn't the last help, either, since the UK Controller for Atomic Energy Sir Freddie Morgan wrote Penney on 9 February 1953 that Fuchs was continuing to help. Another gem: Close gives, on p396, the story of how the FBI became suspicious of Edward Teller, after finding a man of his name teaching at the NY Communist Workers School in 1941 - the wrong Edward Teller, of course - yet Teller's wife was indeed a member of the Communist-front "League of women shoppers" in Washington, DC.

Chapman Pincher, who attended the Fuchs trial, writes about Fuchs hydrogen bomb lectures to prisoners in chapter 19 of his 2014 autobiography, Dangerous to know (Biteback, London, pp217-8): "... Donald Hume ... in prison had become a close friend of Fuchs ... Hume had repaid Fuchs' friendship by organising the smuggling in of new scientific books ... Hume had a mass of notes ... I secured Fuchs's copious notes for a course of 17 lectures ... including how the H-bomb works, which he had given to his fellow prisoners ... My editor agreed to buy Hume's story so long as we could keep the papers as proof of its authenticity ... Fuchs was soon due for release ..."

Chapman Pincher wrote about this as the front page exclusive of the 11 June 1952 Daily Express, "Fuchs: New Sensation", the very month Penney visited Fuchs in prison to receive his H-bomb tutorial! UK media insisted this was evidence that UK security still wasn't really serious about deterring further nuclear spies, and the revelations finally culminated in the allegations that the MI5 chief 1956-65 Roger Hollis was a Russian fellow-traveller (Hollis was descended from Peter the Great, according to his elder brother Chris Hollis' 1958 book Along the Road to Frome) and GRU agent of influence, codenamed "Elli". Pincher's 2014 book, written aged 100, explains that former MI5 agent Peter Wright suspected Hollis was Elli after evidence collected by MI6 agent Stephen de Mowbray was reported to the Cabinet Secretary. Hollis is alleged to have deliberately fiddled his report of interviewing GRU defector Igor Gouzenko on 21 November 1945 in Canada. Gouzenko had exposed the spy and Groucho Marx lookalike Dr Alan Nunn May (photo below), and also a GRU spy in MI5 codenamed Elli, who used only duboks (dead letter boxes), but Gouzenko told Pincher that when Hollis interviewed him in 1945 he wrote up a lengthy false report claiming to discredit many statements by Gouzenko: "I could not understand how Hollis had written so much when he had asked me so little. The report was full of nonsense and lies. As [MI5 agent Patrick] Stewart read the report to me [during the 1972 investigation of Hollis], it became clear that it had been faked to destroy my credibility so that my information about the spy in MI5 called Elli could be ignored. I suspect that Hollis was Elli." (Source: Pincher, 2014, p320.) Christopher Andrew claimed Hollis couldn't have been GRU spy Elli because KGB defector Oleg Gordievsky suggested it was the KGB spy Leo Long (sub-agent of KGB spy Anthony Blunt). However, Gouzenko was GRU, not KGB like Long and Gordievsky! 
Gordievsky's claim that "Elli" was on the cover of Long's KGB file was debunked by KGB officer Oleg Tsarev, who found that Long's codename was actually Ralph! Another declassified Russian document, from General V. Merkulov to Stalin dated 24 Nov 1945, confirmed Elli was a GRU agent inside British intelligence, whose existence was betrayed by Gouzenko. In Chapter 30 of Dangerous to Know, Pincher related how he was given a Russian suitcase sized microfilm enlarger by 1959 Hollis spying eyewitness Michael J. Butt, doorman for secret communist meetings in London. According to Butt, Hollis delivered documents to Brigitte Kuczynski, younger sister of Klaus Fuchs' original handler, the notorious Sonia aka Ursula. Hollis allegedly provided Minox films to Brigitte discreetly when walking through Hyde Park at 8pm after work. Brigitte gave her Russian made Minox film enlarger to Butt to dispose of, but he kept it in his loft as evidence. (Pincher later donated it to King's College.) Other more circumstantial evidence is that Hollis recruited the spy Philby, Hollis secured spy Blunt immunity from prosecution, Hollis cleared Fuchs in 1943, and MI5 allegedly destroyed Hollis' 1945 interrogation report on Gouzenko, to prevent the airing of the scandal that it was fake after checking it with Gouzenko in 1972.

It should be noted that the very small number of Russian GRU illegal agents in the UK and the very small communist party membership had a relatively large influence on nuclear policy via infiltration of unions which had block votes in the Labour Party, as well as the indirect CND and "peace movement" lobbies saturating the popular press with anti-civil defence propaganda to make the nuclear deterrent totally incredible for any provocation short of a direct all-out countervalue attack. Under such pressure, UK Prime Minister Harold Wilson's government abolished the UK Civil Defence Corps, making the UK nuclear deterrent totally incredible against major provocations, in March 1968. While there was some opposition to Wilson, it was focussed on his profligate nationalisation policies which were undermining the economy and thus destabilizing military expenditure for national security. Peter Wright's 1987 book Spycatcher and various other sources, including Daily Mirror editor Hugh Cudlipp's book Walking on Water, documented that on 8 May 1968, the Bank of England's director Cecil King, who was also Chairman of Daily Mirror newspapers, Mirror editor Cudlipp and the UK Ministry of Defence's anti-nuclear Chief Scientific Adviser Sir Solly Zuckerman, met at Lord Mountbatten's house in Kinnerton Street, London, to discuss a coup d'état to overthrow Wilson and make Mountbatten the UK President, a new position. King's position, according to Cudlipp - quite correctly as revealed by the UK economic crises of the 1970s when the UK was effectively bankrupt - was that Wilson was setting the UK on the road to financial ruin and thus military decay. Zuckerman and Mountbatten refused to take part in a revolution; however, Wilson's government was attacked by the Daily Mirror in a front page editorial by Cecil King two days later, on 10 May 1968, headlined "Enough is enough ... Mr Wilson and his Government have lost all credibility, all authority."
According to Wilson's secretary Lady Falkender, Wilson was only told of the coup discussions in March 1976.

CND and the UK communist party alternately tried to claim, in a contradictory way, that they were (a) too small in numbers to have any influence on politics, and (b) they were leading the country towards utopia via unilateral nuclear disarmament saturation propaganda about nuclear weapons annihilation (totally ignoring essential data on different nuclear weapon designs, yields, heights of burst, the "use" of a weapon as a deterrent to PREVENT an invasion of concentrated force, etc.) via the infiltrated BBC and most other media. Critics pointed out that Nazi Party membership in Germany was only 5% when Hitler became dictator in 1933, while in Russia there were only 200,000 Bolsheviks in September 1917, out of 125 million, i.e. 0.16%. Therefore, the whole threat of such dictatorships is a minority seizing power beyond its justifiable numbers, and controlling a majority which has different views. Traditional democracy itself is a dictatorship of the majority (via the ballot box, a popularity contest); minority-dictatorship by contrast is a dictatorship by the fanatically motivated minority by force and fear (coercion) to control the majority. The coercion tactics used by foreign dictators to control the press in free countries are well documented, but never publicised widely. Hitler put pressure on Nazi-critics in the UK "free press" via UK Government appeasers Halifax, Chamberlain and particularly the loathsome UK ambassador to Nazi Germany, Sir Nevile Henderson, for example trying to censor or ridicule appeasement critic David Low, to fire Captain W. E. Johns (editor of both Flying and Popular Flying, which had huge circulations and attacked appeasement as a threat to national security in order to reduce rearmament expenditure), and to try to get Winston Churchill deselected. These were all sneaky "back door" pressure-on-publishers tactics, dressed up as efforts to "ease international tensions"!
The same occurred during the Cold War, with personal attacks in Scientific American and Bulletin of the Atomic Scientists and by fellow travellers on Herman Kahn, Eugene Wigner, and others who warned we need civil defence to make a deterrent of large provocations credible in the eyes of an aggressor.

Chapman Pincher summarises the vast hypocritical Russian expenditure on anti-Western propaganda against the neutron bomb in Chapter 15, "The Neutron Bomb Offensive" of his 1985 book The Secret Offensive: "Such a device ... carries three major advantages over Hiroshima-type weapons, particularly for civilians caught up in a battle ... against the massed tanks which the Soviet Union would undoubtedly use ... by exploding these warheads some 100 feet or so above the massed tanks, the blast and fire ... would be greatly reduced ... the neutron weapon produces little radioactive fall-out so the long-term danger to civilians would be very much lower ... the weapon was of no value for attacking cities and the avoidance of damage to property can hardly be rated as of interest only to 'capitalists' ... As so often happens, the constant repetition of the lie had its effects on the gullible ... In August 1977, the [Russian] World Peace Council ... declared an international 'Week of action' against the neutron bomb. ... Under this propaganda Carter delayed his decision, in September ... a Sunday service being attended by Carter and his family on 16 October 1977 was disrupted by American demonstrators shouting slogans against the neutron bomb [see the 17 October 1977 Washington Post] ... Lawrence Eagleburger, when US Under Secretary of State for Political Affairs, remarked, 'We consider it probable that the Soviet campaign against the neutron bomb cost some $100 million'. ... Even the Politburo must have been surprised at the size of what it could regard as a Fifth Column in almost every country." [Unfortunately, Pincher himself had contributed to the anti-nuclear nonsense in his 1965 novel "Not with a bang" in which small amounts of radioactivity from nuclear fallout combine with medicine to exterminate humanity! The allure of anti-nuclear propaganda extends to all who wish to sell "doomsday fiction", not just Russian dictators but mainstream media story tellers in the West.
By contrast, Glasstone and Dolan's 1977 Effects of Nuclear Weapons doesn't even mention the neutron bomb, so there was no scientific and technical effort whatsoever by the West to make it a credible deterrent even in the minds of the public it had to protect from WWIII!]

This debunks fake news that Teller's and Ulam's 9 March 1951 report LAMS-1225 itself gave Los Alamos the Mike H-bomb design, ready for testing! Teller was proposing a series of nuclear tests of the basic principles, not the 10 Mt Ivy-Mike, which was based on a report the next month by Teller alone, LA-1230, "The Sausage: a New Thermonuclear System". Given that, what did Ulam actually contribute to the hydrogen bomb? Nothing about implosion, compression or separate stages - all already done by von Neumann and Fuchs five years earlier - just a lot of drivel about trying to channel material shock waves from a primary to compress another fissile core, a real dead end. What Ulam did was to kick Teller out of his self-imposed mental objection to compression devices. Everything else was Teller's: the radiation mirrors, the Sausage with its outer ablation pusher and its inner spark plug. Note also that contrary to official historian Arnold's book (which claims, due to a misleading statement by Dr Corner, that all the original 1946 UK copies of Superbomb Conference documentation were destroyed after being sent from AWRE Aldermaston to London between 1955 and 1963), all the documents did exist in the AWRE TPN (theoretical physics notes, 100% of which have been preserved) and are at the UK National Archives, e.g. AWRE-TPN 5/54 is listed in National Archives discovery catalogue ref ES 10/5: "Miscellaneous super bomb notes by Klaus Fuchs", see also the 1954 report AWRE-TPN 6/54, "Implosion super bomb: substitution of U235 for plutonium" ES 10/6, the 1954 report AWRE-TPN 39/54 is "Development of the American thermonuclear bomb: implosion super bomb" ES 10/39, see also ES 10/21 "Collected notes on Fermi's super bomb lectures", ES 10/51 "Revised reconstruction of the development of the American thermonuclear bombs", ES 1/548 and ES 1/461 "Superbomb Papers", etc.
Many reports are secret and retained, despite containing "obsolete" designs (although UK report titles are generally unredacted, such as: "Storage of 6kg Delta (Phase) -Plutonium Red Beard (tactical bomb) cores in ships")! It should also be noted that the Livermore Laboratory's 1958 TUBA spherical secondary with an oralloy (enriched U235) outer pusher was just a reversion from Teller's 1951 core spark plug idea in the middle of the fusion fuel, back to the 1944 von Neumann scheme of having fission material surrounding the fusion fuel. In other words, the TUBA was just a radiation and ionization imploded, internally fusion-boosted, second fission stage which could have been accomplished a decade earlier if the will had existed, when all of the relevant ideas were already known. The declassified UK spherical secondary-stage alternatives linked here (tested as Grapple X, Y and Z with varying yields but similar size, since all used the 5 ft diameter Blue Danube drop casing) clearly show that a far more efficient fusion burn occurs by minimising the mass of hard-to-compress U235 (oralloy) sparkplug/pusher, but maximising the amount of lithium-7, not lithium-6. Such a secondary with minimal fissionable material also automatically has minimal neutron ABM vulnerability (i.e., "Radiation Immunity", RI). This is the current cheap Russian neutron weapon design, but not the current Western design of warheads like the W78, W88 and bomb B61.

So why on earth doesn't the West take the cheap efficient option of cutting expensive oralloy and maximising cheap natural (mostly lithium-7) LiD in the secondary? Even Glasstone's 1957 Effects of Nuclear Weapons on p17 (para 1.55) states that "Weight for weight ... fusion of deuterium nuclei would produce nearly 3 times as much energy as the fission of uranium or plutonium"! The sad answer is "density"! Natural LiD (containing 7.42% Li6 abundance) is a low density white/grey crystalline solid like salt that actually floats on water (lithium deuteroxide would be formed on exposure to water), since its density is just 820 kg/m^3. Since the ratio of mass of Li6D to Li7D is 8/9, it would be expected that the density of highly enriched 95% Li6D is 739 kg/m^3, while for 36% enriched Li6D it is 793 kg/m^3. Uranium metal has a density of 19,000 kg/m^3, i.e. 25.7 times greater than 95% enriched Li6D or 24 times greater than 36% enriched Li6D. Compactness, i.e. volume, is more important in a Western MIRV warhead than mass/weight! In the West, it's best to have a tiny-volume, very heavy, very expensive warhead. In Russia, cheapness outweighs volume considerations. The Russians in some cases simply allowed their more bulky warheads to protrude from the missile bus (see photo below), or compensated for lower yields at the same volume using clean LiD by using the savings in costs to build more warheads. (The West doubles the fission yield/mass ratio of some warheads by using U235/oralloy pushers in place of U238, which suffers from the problem that about half the neutrons it interacts with result in non-fission capture, as explained below.
Note that the 720 kiloton UK nuclear test Orange Herald device contained a hollow shell of 117 kg of U235 surrounded by what Lorna Arnold's book quotes John Corner as calling a "very thin" layer of high explosive, and was compact and unboosted - the boosting failed to work - and gave 6.2 kt/kg of U235, whereas the first version of the 2-stage W47 Polaris warhead contained 60 kg of U235 which produced most of the secondary stage yield of about 400 kt, i.e. 6.7 kt/kg of U235. Little difference - but because perhaps 50% of the total yield of the W47 was fusion, its efficiency of use of U235 must have actually been less than that of the Orange Herald device, around 3 kt/kg of U235, which indicates design efficiency limits to "hydrogen bombs"! Yet anti-nuclear charlatans claimed that the Orange Herald bomb was a con!)
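The density and kt/kg figures quoted above can be re-derived with a few lines of arithmetic. The following is only a rough sketch using the numbers given in the text. It assumes that LiD density scales linearly with mean molecular mass (i.e. that isotopic substitution leaves the crystal spacing unchanged) and that integer mass numbers (Li6D = 8, Li7D = 9) are good enough. The function names are hypothetical, and the 50% fusion share for the W47 is the "perhaps 50%" guess from the text, not a measured value.

```python
# Illustrative arithmetic only: every input figure is one quoted in the text.

NATURAL_LI6_FRACTION = 0.0742  # Li6 abundance in natural lithium (text figure)
NATURAL_LID_DENSITY = 820.0    # kg/m^3 for natural LiD (text figure)
URANIUM_DENSITY = 19000.0      # kg/m^3, the rounded figure used in the text


def mean_mass_number(li6_fraction):
    """Mean LiD mass number, taking Li6D = 8 and Li7D = 9 (assumption)."""
    return 8.0 * li6_fraction + 9.0 * (1.0 - li6_fraction)


def lid_density(li6_fraction):
    """Scale the natural-LiD density by mean mass number (assumed fixed lattice)."""
    scale = mean_mass_number(li6_fraction) / mean_mass_number(NATURAL_LI6_FRACTION)
    return NATURAL_LID_DENSITY * scale


def yield_per_kg(yield_kt, u235_kg):
    """Yield in kilotons per kilogram of U235 loaded in the device."""
    return yield_kt / u235_kg


if __name__ == "__main__":
    for enrichment in (0.95, 0.36):
        rho = lid_density(enrichment)
        ratio = URANIUM_DENSITY / rho
        print(f"{enrichment:.0%} Li6D: {rho:.0f} kg/m^3, uranium is {ratio:.1f}x denser")

    print(f"Orange Herald: {yield_per_kg(720, 117):.1f} kt/kg of U235")
    print(f"W47 (all yield): {yield_per_kg(400, 60):.1f} kt/kg of U235")
    # Assumed 50% fusion share, per the text's "perhaps 50%".
    print(f"W47 (fission half only): {yield_per_kg(400 * 0.5, 60):.1f} kt/kg of U235")
```

Under these assumptions the results agree with the figures in the text to within rounding (the 36% enrichment case comes out a fraction of a kg/m^3 above the quoted 793 kg/m^3).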

ABOVE: USA nuclear weapons data declassified by the UK Government in 2010 (the information was originally acquired due to the 1958 UK-USA Act for Cooperation on the Uses of Atomic Energy for Mutual Defense Purposes, in exchange for UK nuclear weapons data) as published at http://nuclear-weapons.info/images/tna-ab16-4675p63.jpg. This single table summarizes all key tactical and strategic nuclear weapons secret results from 1950s testing! (In order to analyze the warhead pusher thicknesses and very basic schematics from this table it is necessary to supplement it with the 1950s warhead design data declassified in other documents, particularly some of the data from Tom Ramos and Chuck Hansen, as quoted in some detail below.) The data on the mass of special nuclear materials in each of the different weapons argues strongly that the entire load of Pu239 and U235 in the 1.1 megaton B28 was in the primary stage, so that weapon could not have had a fissile spark plug in the centre, let alone a fissile ablator (unlike Teller's Sausage design of 1951), and so the B28, it appears, had no need whatsoever of a beryllium neutron radiation shield to prevent pre-initiation of the secondary stage prior to its compression (on the contrary, such neutron exposure of the lithium deuteride in the secondary stage would be VITAL to produce some tritium in it prior to compression, to spark fusion when it was compressed). Arnold's book indeed explains that UK AWE physicists found the B28 to be an excellent, highly optimised, cheap design, unlike the later W47 which was extremely costly. The masses of U235 and Li6 in the W47 show the difficulties of trying to maintain efficiency while scaling down the mass of a two-stage warhead for SLBM delivery: much larger quantities of Li6 and U235 must be used to achieve a LOWER yield! To achieve thermonuclear warheads of low mass at sub-megaton yields, both the outer bomb casing and the pusher around the fusion fuel must be reduced:

"York ... studied the Los Alamos tests in Castle and noted most of the weight in thermonuclear devices was in their massive cases. Get rid of the case .... On June 12, 1953, York had presented a novel concept ... It radically altered the way radiative transport was used to ignite a secondary - and his concept did not require a weighty case ... they had taken the Teller-Ulam concept and turned it on its head ... the collapse time for the new device - that is, the amount of time it took for an atomic blast to compress the secondary - was favorable compared to older ones tested in Castle. Brown ... gave a female name to the new device, calling it the Linda." - Dr Tom Ramos (Lawrence Livermore National Laboratory nuclear weapon designer), From Berkeley to Berlin: How the Rad Lab Helped Avert Nuclear War, Naval Institute press, 2022, pp137-8. (So if you reduce the outer casing thickness to reduce warhead weight, you must complete the pusher ablation/compression faster, before the thinner outer casing is blown off, and stops reflecting/channelling x-rays on the secondary stage. Making the radiation channel smaller and ablative pusher thinner helps to speed up the process. Because the ablative pusher is thinner, there is relatively less blown-off debris to block the narrower radiation channel before the burn ends.)

"Brown's third warhead, the Flute, brought the Linda concept down to a smaller size. The Linda had done away with a lot of material in a standard thermonuclear warhead. Now the Flute tested how well designers could take the Linda's conceptual design to substantially reduce not only the weight but also the size of a thermonuclear warhead. ... The Flute's small size - it was the smallest thermonuclear device yet tested - became an incentive to improve codes. Characteristics marginally important in a larger device were now crucially important. For instance, the reduced size of the Flute's radiation channel could cause it to close early [with ablation blow-off debris], which would prematurely shut off the radiation flow. The code had to accurately predict if such a disaster would occur before the device was even tested ... the calculations showed changes had to be made from the Linda's design for the Flute to perform correctly." - Dr Tom Ramos (Lawrence Livermore National Laboratory nuclear weapon designer), From Berkeley to Berlin: How the Rad Lab Helped Avert Nuclear War, Naval Institute press, 2022, pp153-4. Note that the piccolo (the W47 secondary) is a half-sized flute, so it appears that the W47's secondary stage design miniaturization history was: Linda -> Flute -> Piccolo:

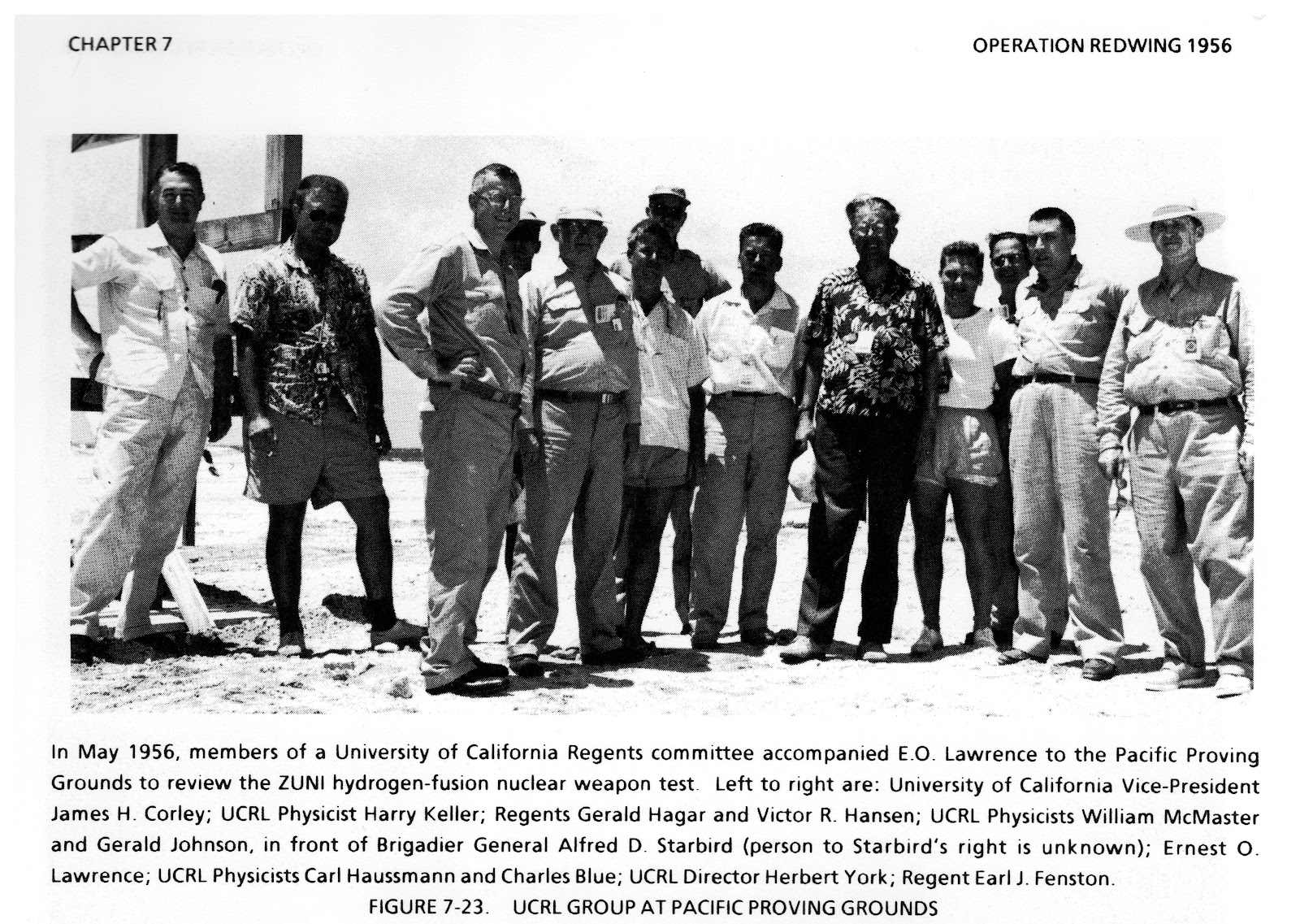

"A Division's third challenge was a small thermonuclear warhead for Polaris [the nuclear SLBM submarine that preceeded today's Trident system]. The starting point was the Flute, that revolutionary secondary that had performed so well the previous year. Its successor was called the Piccolo. For Plumbbob [Nevada, 1957], the design team tested three variations of the Piccolo as a parameter test. One of the variants outperformed the others ... which set the stage for the Hardtack [Nevada and Pacific, 1958] tests. Three additional variations for the Piccolo ... were tested then, and again an optimum candidate was selected. ... Human intuition as well as computer calculations played crucial roles ... Finally, a revolutionary device was completed and tested ... the Navy now had a viable warhead for its Polaris missile. From the time Brown gave Haussmann the assignment to develop this secondary until the time they tested the device in the Pacific, only 90 days had passed. As a parallel to the Robin atomic device, this secondary for Polaris laid the foundation for modern thermonuclear weapons in the United States." - Dr Tom Ramos (Lawrence Livermore National Laboratory nuclear weapon designer), From Berkeley to Berlin: How the Rad Lab Helped Avert Nuclear War, Naval Institute press, 2022, pp177-8. (Ramos is very useful in explaining that many of the 1950s weapons with complex non-spherical, non-cylindrical shaped primaries and secondaries were simply far too complex to fully simulate on the really pathetic computers they had - Livermore got a 4,000 vacuum tubes-based IBM 701 with 2 kB memory in 1956, AWRE Aldermaston in the Uk had to wait another year for theirs - so they instead did huge numbers of experimental explosive tests. 
For instance, on p173, Ramos discloses that the Swan primary which developed into the 155mm tactical shell, "went through over 100 hydrotests", non-nuclear tests in which fissile material is replaced with U238 or other substitutes, and the implosion is filmed with flash x-ray camera systems.)

"An integral feature of the W47, from the very start of the program, was the use of an enriched uranium-235 pusher around the cylindrical secondary." - Chuck Hansen, Swords 2.0, p. VI-375 (Hansen's source is his own notes taken during a 19-21 February 1992 nuclear weapons history conference he attended; if you remember the context, "Nuclear Glasnost" became fashionable after the Cold War ended, enabling Hansen to acquire almost unredacted historical materials for a few years until nuclear proliferation became a concern in Iraq, Afghanistan, Iran and North Korea). The key test of the original (Robin primary and Piccolo secondary) Livermore W47 was 412 kt Hardtack-Redwood on 28 June 1958. Since Li6D utilized at 100% efficiency would yield 66 kt/kg, the W47 fusion efficiency was only about 6%; since 100% fission of u235 yields 17 kt/kg, the W47's Piccolo fission (the u235 pusher) efficiency was about 20%; the comparable figures for secondary stage fission and fusion fuel burn efficiencies in the heavy B28 are about 7% and 15%, respectively: